Image-based Personalised Models of Cardiac Electrophysiology for Ventricular Tachycardia Therapy Planning

1 Introduction

The muscular organ that beats in our chests was recognised as vital by our ancestors long before the invention of writing. Home of the soul in the ancient Egyptian religion, object of interest for Greek philosophers, it took several centuries before its role (pumping blood, oxygen and nutrients throughout our bodies) stopped being debated. In the 21st century, it is the (almost) universal symbol of love, probably because of the very perceptible modifications of its pace when emotions overwhelm us, a consequence of its control by our autonomic nervous system.

This control is mediated by electrochemical reactions involving both the nervous and the cardiovascular system. Muscle cells, similarly to neurons, exhibit brief and localised but propagating perturbations of their transmembrane voltage, the action potentials. In the heart, the complex dynamics of this propagation are fundamental to its optimal contraction.

In this manuscript we will not directly focus on the pumping role of the heart, but rather on its electrical activity, i.e., on the signals that trigger its contraction.

1.1 Biomedical aspects

1.1.1 Cardiac Physiology

1.1.1.1 Pump function

Human hearts, like those of other mammals and of birds, are divided into four chambers. Two atria receive blood from the venous system and pump it into the ventricles through the mitral and tricuspid valves. The ventricles, in turn, eject it through the aortic and pulmonary valves into the arterial network. Atria and ventricles are grouped two by two to form the right and left hearts. The former is responsible for pumping “used” blood to the lungs, where it releases carbon dioxide and takes up oxygen. The latter pumps oxygen-rich blood to the organs, which use this gas to produce energy.

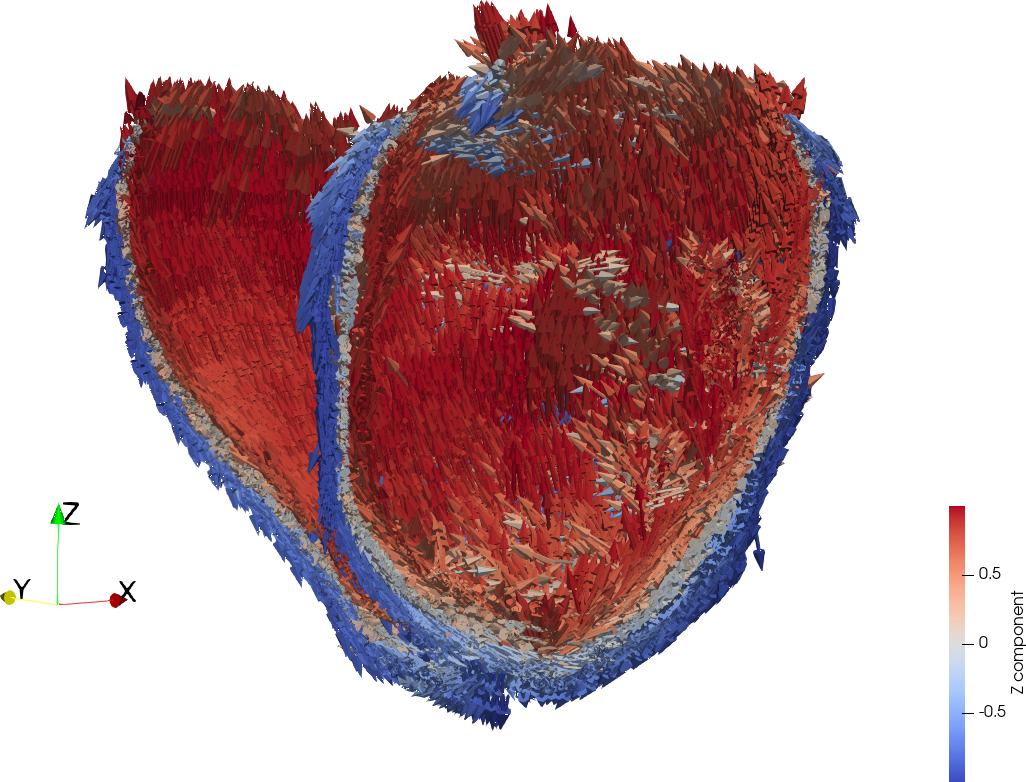

The heart contraction ensures an efficient expulsion of the blood as it shortens along axes determined by the fibre orientation (fig. 2) of the heart muscle.

1.1.1.2 Sinus rhythm

Unlike that of skeletal muscles, the contraction of the heart is not triggered by the release of neurotransmitters at the neuromuscular junction. Under normal conditions, the harmonious, orderly, efficient contraction of the heart is initiated by a group of cells located in the right atrium: the sinus node (SN), sometimes referred to as our natural pacemaker. The role of the nervous system is here indirect: it modulates the activity of the autonomous rhythmic heart cells of which the sinus node is a part.

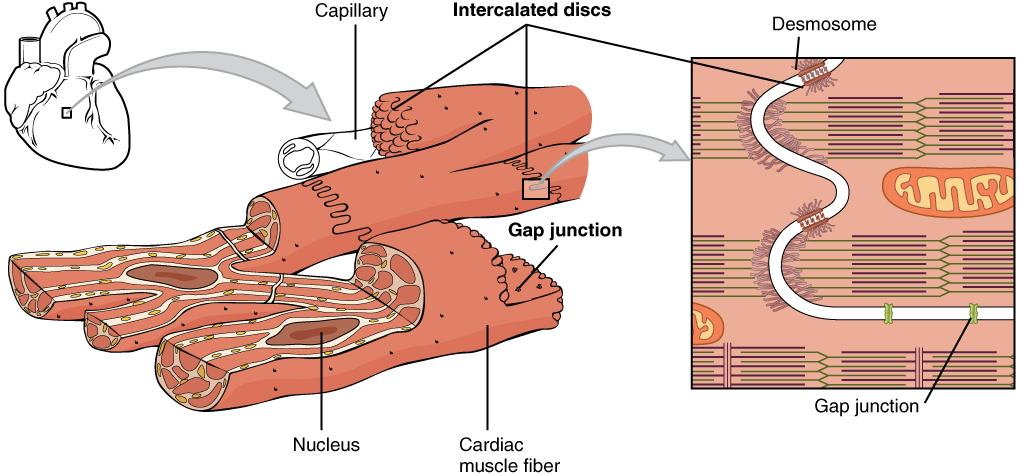

The contraction signal is mediated by a change of transmembrane potential (action potential) in the cardiac cells (the cardiomyocytes). This voltage change propagates throughout the whole heart tissue (the myocardium) via various structures among which the gap junctions, that connect adjacent cells’ cytoplasms to form a syncytium, play a major role.

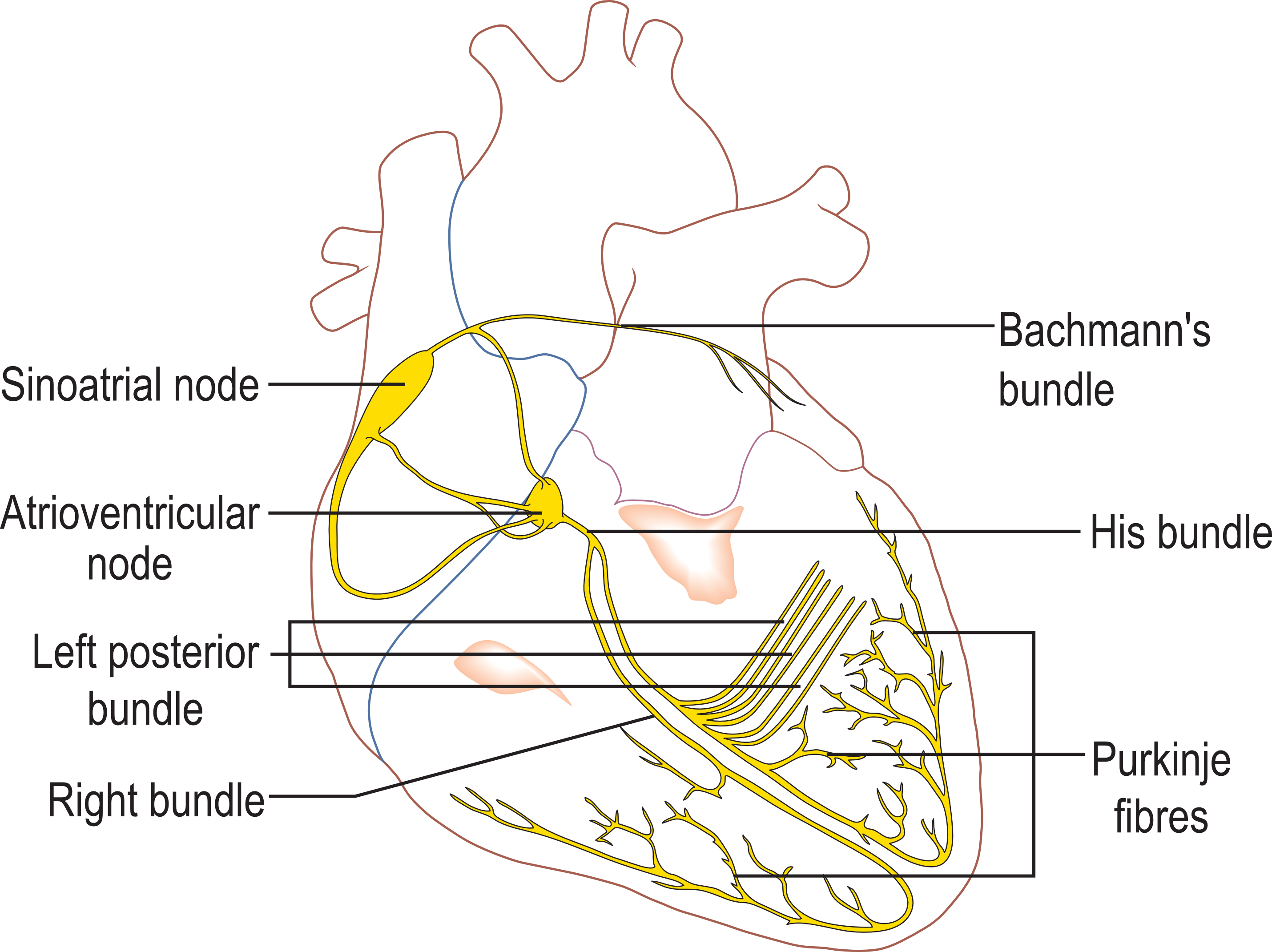

This propagation is faster in specialised groups of cells that constitute the conduction system of the heart illustrated in fig. 1. It follows preferred directions, guided by the arrangement of myocardial fibres, that reflects the underlying organisation of cells at the histological level. In a physiological setting, this excitation wave, initiated in the SN only, reaches each part of the myocardium only once.

Except in specific conditions, the electrical communication between the atria and the ventricles is unidirectional and only possible through a single checkpoint: the atrio-ventricular node. This is why, from now on in this manuscript, which focuses on abnormal electrical behaviours of the left ventricle, we will barely mention the atria, whose modelling has its own specificities and challenges (Dössel et al. 2012). This reductionism obviously comes with limitations, but “what is simple is always wrong; what is not is unusable.”

1.1.1.3 Cardiac action potential

Because of differences in the concentrations of ions between the cytoplasm and the extra-cellular compartment, there is a measurable difference of electrical potential between the inside and the outside of the lipid bilayer that forms the cell membrane: the cell resting potential, which is negative in human ventricular cardiomyocytes. The action potential (AP) mentioned in the previous section is a voltage-induced, localised, quick and propagating change of ion concentrations inside and outside the cardiac cell. Although moderate, this change in concentration transiently reverses the polarity of the transmembrane potential (fig. 3). This change is due to the activation of specialised proteins: the ion channels and transporters. These ionophore proteins are located on the cytoplasmic and sarcoplasmic cell membranes, allowing ions to travel across these membranes either passively under the influence of electrostatic and osmotic forces (ion channels) or actively via energy consumption (ion transporters).

In the human ventricular myocyte, this AP is classically divided into five phases, illustrated schematically in fig. 3. Phase 0 is a rapid depolarisation of the membrane mainly involving the opening of sodium and calcium channels. These positive ionic species, whose concentration is low in the cytoplasm during the resting potential, quickly raise the transmembrane potential to a positive voltage. Phase 1 is a transient notch before the closing of sodium channels and the characteristic plateau of phase 2, where an inward flux of calcium ions is electrically balanced by an outward flux of potassium ions. The calcium ions, by binding to specific receptors on the protein machinery responsible for the contraction of cardiomyocytes, actually constitute the true messengers of the contraction signal at the sub-cellular level. Finally, phase 3 is the repolarisation phase where, after the closing of the calcium channels, potassium channels repolarise the cell to its resting potential (phase 4).

It is important to mention here that the sodium channels involved in phase 0 are voltage-dependent: they only open if the membrane potential is raised above a certain threshold value. This initial rise in voltage is mediated by nearby ionic movements in the same cell or between cells through specific structures (gap junctions). Thus, the influence of a cell’s neighbourhood is what causes the propagation of the AP through the heart tissue.

From phase 1 to phase 3, no change in membrane potential can trigger another depolarisation, creating an absolute refractory period of the cardiomyocyte. The effective refractory period in fact extends to a certain duration during phase 4, where despite a membrane potential equal to the resting potential, no new excitation is possible. This can be seen as a “natural safety mechanism” to prevent constant re-excitation by a single depolarisation wave.

1.1.2 Infarct and arrhythmia

![Figure 4: Infarct. [A] overview of the coronary system; [B] cross-section of the coronary artery with details on the occlusion process. In this thesis, we prefer the term “infarct scar” over “dead heart muscle,” and differentiate various levels of scar/healthy cell combinations throughout the organ. (illustration from https://www.nhlbi.nih.gov/health-topics/heart-attack, public domain)](fig/infarct.png)

The pumping function of the heart is also responsible for transporting oxygen and nutrients to the heart itself via the arterial coronary system. When this process is disturbed by occlusions of vessels in this system (fig. 4), the activity of the cardiomyocytes becomes inefficient: this painful process is the acute phase of infarction. If the occlusion lasts, the myocardium suffers irreversible changes (chronic infarction): muscular cells are replaced by fibrotic tissue whose contractility and electrochemical properties differ from those of healthy myocytes.

The past decades have seen dramatic improvements in survival rates of acute infarcts, but the permanent damage to the cardiac muscle is responsible for a large part of infarct-related mortality and morbidity in rich countries. These chronic conditions include heart failure, when they affect the pumping function of the heart, and arrhythmia, when they affect the conduction of the depolarisation wave.

One particular infarct-related arrhythmia is re-entrant ventricular tachycardia (VT). In chronic infarct-related VT, instead of “crashing” in the late repolarisation sites of the myocardium, a depolarisation wave re-excites (re-enters) parts of the myocardium that are out of their refractory period. The ventricle thus enters a self-sustained, disorganised, rapid and inefficient contraction, short-circuiting the physiological role of the SN. In the worst cases this leads to an even more disorganised and lethal arrhythmia: ventricular fibrillation.

1.1.3 VT therapy

Different therapeutic approaches are possible for ischaemia-related VT. On the one hand, a possible symptomatic treatment of VT relies on an implantable cardioverter defibrillator (ICD), which detects arrhythmia and delivers electric shocks (cardioversion) to the patient when needed. On the other hand, the etiologic treatment for VT is the ablation, usually with radio-frequency energy (radio-frequency ablation, RFA), of the arrhythmogenic substrate, i.e., the electrical inactivation of the zones responsible for the re-entry. The risks and challenges of this approach are detailed in the introductions of the articles reproduced in section 2.

During these procedures, the interventional cardiologist first records the electrical activity of the cardiomyocytes. For the work presented in this manuscript, we had access to such recordings for 10 different patients, which helped us build the major part of our scientific contributions.

It is worth mentioning here that cardiac radiology, and particularly computed tomography and magnetic resonance imaging, by providing insights on the topology of the ischaemic scar prior to entering the catheter lab, are very valuable for such interventions (Berruezo et al. 2015).

1.2 Cardiac electrophysiological models

1.2.1 At the cell level

The inspiration for mathematical models of action potential is the Hodgkin–Huxley model (Hodgkin and Huxley 1952), for which the authors were awarded the Nobel Prize in 1963. This model aimed at reproducing the electrical behaviour of the squid giant axon by extensively describing the movements of ions across its membrane (neurons, like cardiomyocytes, exhibit action potentials when activated). Many models in the same spirit have been developed specifically for the description of action potentials of cardiomyocytes, and are regularly updated with new insights from molecular biology.

These cell-level models of cardiac electrophysiology (EP) can be divided into two subgroups:

“Extensive” models: they incorporate detailed information on the behaviour of numerous ionic channels (Luo and Rudy 1991), although some remain computationally tractable for whole-organ simulations (Ten Tusscher et al. 2004). They can be specific to certain animal species (Mahajan et al. 2008) or to a cardiac cavity (Courtemanche, Ramirez, and Nattel 1998).

“Simplified” models (Aliev and Panfilov 1996; Mitchell and Schaeffer 2003; Gray and Franz 2020): they aim at reproducing the general changes of voltage over time and specific properties surrounding this change in cardiac cells. These models, which generally group several ionic movements together, follow the philosophy of the FitzHugh-Nagumo model (FitzHugh 1955), which was a phenomenological simplification of the Hodgkin–Huxley model (Hodgkin and Huxley 1952). In this manuscript, we will only use this second type of AP model (section 4).
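To give a concrete idea of what a “simplified” model looks like, the following minimal sketch integrates the two-variable model of Mitchell and Schaeffer (2003) for a single cell with a forward Euler scheme. The parameter values, the stimulus and the time step are illustrative assumptions chosen for readability, not the values used in this thesis; the function names are hypothetical.

```python
def mitchell_schaeffer(t_end=500.0, dt=0.02, tau_in=0.3, tau_out=6.0,
                       tau_open=120.0, tau_close=150.0, v_gate=0.13,
                       stim_amp=0.1, stim_dur=2.0):
    """Forward-Euler integration of the Mitchell-Schaeffer model (single cell).

    v is the normalised transmembrane potential (0 = rest, close to 1 = plateau)
    and h a gating variable. Times are in ms; all default values are
    illustrative assumptions. Returns the lists of times and voltages.
    """
    v, h = 0.0, 1.0
    times, volts = [], []
    for k in range(int(t_end / dt)):
        t = k * dt
        stim = stim_amp if t < stim_dur else 0.0
        # inward (depolarising) and outward (repolarising) lumped currents
        dv = h * v * v * (1.0 - v) / tau_in - v / tau_out + stim
        # the gate closes while the cell is depolarised and re-opens at rest
        dh = (1.0 - h) / tau_open if v < v_gate else -h / tau_close
        v += dt * dv
        h += dt * dh
        times.append(t)
        volts.append(v)
    return times, volts


def action_potential_duration(times, volts, threshold=0.13):
    """Time (ms) between the first and last samples above the threshold."""
    above = [t for t, v in zip(times, volts) if v > threshold]
    return above[-1] - above[0] if above else 0.0


times, volts = mitchell_schaeffer()
apd = action_potential_duration(times, volts)
```

With only four time constants and one gate threshold, the shape of the AP (fast upstroke, plateau, repolarisation) is reproduced without describing individual ion channels, which is what makes this family of models attractive for personalisation.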

1.2.2 Reaction-diffusion

In order to move from single-cell to organ-level simulations of cardiac electrical activity, these models must be associated with a mathematical description of how the changes in voltage propagate from one “virtual cell” to the other.

The most accurate formulation of this behaviour is the bi-domain model. In this model, we consider the extra-cellular and intra-cellular spaces as different compartments separated by the cell membrane. Since the partial differential equations governing the bi-domain model are complex and the numerical schemes required to run simulations with them are computationally expensive, it is common to use a simplification called the mono-domain model. In the mono-domain model, we assume that the intra-cellular and extra-cellular domains have equal anisotropy ratios. It can be formulated as:

\[\begin{cases} \chi \left( C_m \frac{\partial v}{\partial t} + I_{ion}(v, \eta ) \right) = \nabla \cdot (\sigma \nabla v) \\ \frac{\partial \eta}{\partial t} = f(v, \eta ) \end{cases} \tag{1}\]

Here, \(\chi\) is the surface-to-volume ratio; \(C_m\) the membrane capacitance; \(\sigma\) the conductivity; \(\frac{\partial v}{\partial t}\) the rate of change of \(v\) (the transmembrane potential) with time; \(I_{ion}\) represents the total ion current, a function of \(v\) and of a vector of state variables \(\eta\). The function \(f\), which governs the evolution of \(\eta\), is given by one of the cell-level models described in the previous section; it depends on \(v\) and on other variables specific to the chosen ionic model.
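As an illustration of eq. 1, the sketch below solves it on a one-dimensional homogeneous cable with explicit finite differences. Dividing eq. 1 by \(\chi C_m\) with a constant scalar conductivity gives \(\partial v/\partial t = D\,\partial^2 v/\partial x^2 - I_{ion}/C_m\) with \(D = \sigma/(\chi C_m)\). The ionic term is taken from the Mitchell and Schaeffer (2003) model in normalised units; the value of \(D\), the grid, the stimulus and the function name are assumptions made for this example only.

```python
import numpy as np


def simulate_cable(n=200, dx=0.1, dt=0.01, t_end=80.0, D=0.1,
                   tau_in=0.3, tau_out=6.0, tau_open=120.0, tau_close=150.0,
                   v_gate=0.13):
    """Explicit finite differences for a 1D mono-domain cable.

    Lengths are in mm and times in ms; every default value is an illustrative
    assumption. Returns the local activation time of each node, defined here
    as the first time the normalised potential reaches 0.5.
    """
    v = np.zeros(n)
    h = np.ones(n)
    v[:10] = 1.0                      # initial stimulus on the first millimetre
    lat = np.full(n, np.inf)
    for k in range(int(t_end / dt)):
        padded = np.pad(v, 1, mode="edge")            # no-flux boundaries
        lap = (padded[:-2] - 2.0 * v + padded[2:]) / dx**2
        # normalised ionic current (positive when outward)
        i_ion = -(h * v**2 * (1.0 - v) / tau_in - v / tau_out)
        dh = np.where(v < v_gate, (1.0 - h) / tau_open, -h / tau_close)
        v = v + dt * (D * lap - i_ion)
        h = h + dt * dh
        newly = (v >= 0.5) & np.isinf(lat)
        lat[newly] = (k + 1) * dt
    return lat


lat = simulate_cable()
# apparent conduction velocity (mm/ms) between nodes 50 and 150 (dx = 0.1 mm)
cv = (150 - 50) * 0.1 / (lat[150] - lat[50])
```

The explicit scheme is only stable for \(D\,\Delta t/\Delta x^2 < 0.5\), which is one of the reasons why organ-scale mono-domain simulations are much more expensive than the Eikonal models discussed next.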

This type of model has been successfully used to model re-entrant VT (Lopez-Perez et al. 2019; Relan, Chinchapatnam, et al. 2011). But even in this simplified formulation, its parameterisation is challenging, because most of the parameters are inaccessible to in vivo exploration in human subjects. We will try to address this issue in section 3 of this thesis.

1.2.3 Eikonal models

Going further down the simplification path, Eikonal models of cardiac electrophysiology form a distinct category, which we investigate in section 2. This category of models ignores, at least partly, the complexity of the dynamics of voltage changes in the cardiac membrane. They assume that the propagation of APs in the myocardium can be described as a wave. This makes them much easier to parameterise and extremely fast to compute, at the cost of losing the ability to simulate complex phenomena, although many variants have been proposed to overcome this limitation (Pernod et al. 2011).

1.2.4 Goals of cardiac modelling

There are different reasons why one would want to model cardiac electrical activity.

Models and simulations can help to understand the conditions of a known, experimentally observed phenomenon (Seemann et al. 2006). This approach mostly belongs to basic science; for instance, one could wonder which ion channels are responsible for specific changes in phases of the AP, or what conduction speeds are necessary to observe a certain phenomenon at a larger scale.

On the other hand, models can also be used for their predictive power; an approach that is already actively used for drug design (Tomek et al. 2020). The work presented in this manuscript also takes advantage of model predictions, and belongs to the field of patient-specific model personalisation.

1.2.5 Patient-specific models

Adapting model parameters to a specific patient is part of the general field of personalised medicine (Corral-Acero et al. 2020) and can be useful for both prognosis refining and therapy planning (Trayanova, Doshi, and Prakosa 2020).

For conditions related to the pump function, mechanical models generally have to take into account the circulatory system; yet successes in the personalisation process are found in the scientific literature (Molléro et al. 2018). Concerning EP model personalisation, it can be relevant to consider the isolated heart, because of its (relative) electrical isolation when we consider depolarisation wave propagation dynamics; this is our approach in this thesis.

The electrocardiographic imaging (ECGi) inverse problem can be seen as a model personalisation problem. Its goal is to recover, non-invasively, the activation sequence of the myocardium (similar to the activation maps obtained by interventional cardiologists during RFA procedures) from body surface electrode potentials. Due to the ill-posed nature of the ECGi problem, priors in the form of EP models have been shown to vastly improve the results, notably when these models incorporate patient-specific imaging data as part of the personalisation process (Giffard-Roisin, Jackson, et al. 2016).

Our work is related to this approach: we set out to establish a patient-specific EP model personalisation framework, based on patient data acquired non-invasively, usable in the context of ischaemic VT. Unlike previous related studies (Relan, Chinchapatnam, et al. 2011; Relan, Pop, et al. 2011), invasively acquired patient data will not be used directly in the personalisation process, but will allow us to investigate how cardiac computed tomography (CT) features can be translated into EP properties.

1.3 Main contributions

Our main contributions, presented throughout this manuscript, are the following:

an image-based Eikonal model personalisation framework using myocardial wall thickness to adjust conduction velocity and able to reproduce periodic activation maps (section 2.1),

a deep learning approach to segment the myocardial wall on high resolution three-dimensional CT images with an accuracy within inter-expert variability (section 2.3.2.1),

signal processing algorithms tailored for the detection of the challenging repolarisation wave on intra-cardiac unipolar electrograms (section 3.2),

a relationship between the thickness of the myocardium and its repolarisation properties (section 3.4.2),

an image-based mono-domain personalisation framework using myocardial wall thickness for personalisation of conduction velocity and restitution (section 4.3).

1.4 Manuscript organisation

In section 2, we present different contributions for Eikonal model personalisation, which also cover image processing algorithms related to the personalisation process and an imaging/electroanatomical map registration process used to compare simulations and clinical EP data. We then reproduce the full versions of our previously published peer-reviewed publications.

In section 3, we extract repolarisation features from intra-cardiac unipolar electrograms and link these features to the myocardial wall thickness. As is the case throughout this thesis, scar assessment is based on left ventricular wall thickness measured on CT images, and is therefore restricted to chronic myocardial infarction.

Finally, in section 4, making good use of the results obtained in section 3, we focus on a mono-domain model of cardiac EP. We show that the repolarisation features obtained in the previous chapter are able to reproduce arrhythmia induction protocols used by interventional cardiologists in the context of post-infarct VT.

2 Eikonal modelling

Eikonal models usually output activation maps, i.e., local activation times (LAT) associated with coordinates in space; in most Eikonal variants, this map represents only one cycle of activation of the myocardium. Interventional cardiologists are familiar with this type of map because, based on the hypothesis of activation periodicity, most EP lab terminals reconstruct the activation map of a whole cardiac cavity by aggregating and synchronising data acquired during different cycles. This is necessary because many exploration catheters can only acquire data on a small part of the myocardium at each cycle. During this recording, the clinician needs to move the catheter inside the cavity during numerous consecutive cycles to recreate an activation map of the whole cavity during one cycle. This explains the surprising geometries and, at least in part, the presence of seemingly aberrant LATs found in clinical activation maps.

In its simplest form (eq. 2), the Eikonal model only requires a heart geometry, a propagation velocity for each simulation element, and starting point(s) for the depolarisation wave initiation. The simulations are very fast to compute thanks to the fast marching method (Sermesant et al. 2005), making them suited for the development of interactive tools (Pernod et al. 2011) and compatible with clinical time frames (Neic et al. 2017).
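The three ingredients listed above (a geometry, a propagation speed per element and one or more starting points) can be illustrated with the following minimal sketch on a regular 2D grid. For brevity it uses Dijkstra's algorithm on the 4-connected grid as a simple stand-in for the fast marching method; this swap, the grid geometry, the units and the function name are assumptions of this example, not the implementation used in this work.

```python
import heapq


def eikonal_activation_map(speed, start, spacing=1.0):
    """Approximate local activation times (ms) on a 2D grid.

    speed[i][j] is the local conduction speed (mm/ms); a value of 0 marks a
    non-conducting element. `start` is the (row, column) of the onset, which
    must be a conducting element. Dijkstra's algorithm on the 4-connected grid
    is used as a stand-in for fast marching.
    """
    n_rows, n_cols = len(speed), len(speed[0])
    lat = [[float("inf")] * n_cols for _ in range(n_rows)]
    lat[start[0]][start[1]] = 0.0
    heap = [(0.0, start)]
    while heap:
        t, (i, j) = heapq.heappop(heap)
        if t > lat[i][j]:
            continue                      # outdated heap entry
        for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            a, b = i + di, j + dj
            if not (0 <= a < n_rows and 0 <= b < n_cols) or speed[a][b] <= 0.0:
                continue
            # travel time across one edge, using the mean slowness of both elements
            dt = spacing * 0.5 * (1.0 / speed[i][j] + 1.0 / speed[a][b])
            if t + dt < lat[a][b]:
                lat[a][b] = t + dt
                heapq.heappush(heap, (t + dt, (a, b)))
    return lat


# Homogeneous 21 x 21 mm sheet, 0.5 mm/ms everywhere, paced from the left edge.
speed = [[0.5] * 21 for _ in range(21)]
lat = eikonal_activation_map(speed, start=(10, 0))
```

On this grid the wave needs 40 ms to cross the 20 mm separating the pacing site from the opposite edge. Unlike fast marching, this graph-based approximation overestimates travel times along diagonals, which is acceptable for an illustration but not for quantitative use.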

Eikonal models of cardiac EP have many limitations for which different solutions have been proposed, for instance to address the anisotropic nature of the AP propagation in the myocardium due to myocardial fibre orientation and/or the front curvature effect (Keener 1991; Jacquemet 2012). Using a multi-front formulation (Pernod et al. 2011; Konukoglu et al. 2011), it is also possible to model more than a single activation cycle.

In this thesis, we mostly used the simplest formulation of the Eikonal model with the exception of our participation in a modelling challenge presented in section 2.4.

In this chapter, we reproduce the full versions of our publications in peer-reviewed conferences (sections 2.1, 2.3 and 2.4) and in a journal (section 2.2). They all concern the Eikonal model of cardiac EP.

Our first publication (VT Scan: Towards an Efficient Pipeline from Computed Tomography Images to Ventricular Tachycardia Ablation, section 2.1) must be seen as a first attempt to use CT imaging as a support for model personalisation in the context of ischaemic re-entrant VT. While we do not introduce any major modification to the formulation of the Eikonal model, we propose direct ways to personalise it based on wall thickness and to reproduce real intra-cardiac recordings of re-entrant VT by imposing a refractory plane to obtain a unidirectional block. By giving a null conduction speed on a plane behind the starting point of the depolarisation and orthogonal to the initial propagation direction, we model the unidirectional propagation pattern typically found in post-infarct VT.
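The effect of such a block can be illustrated on a one-dimensional analogue of a re-entry circuit: a closed ring of nodes in which the node located just behind the starting point is given a null conduction speed. This toy geometry, the speed value and the function name are assumptions made for this sketch; the article applies the same idea with a plane in a three-dimensional ventricular geometry.

```python
import heapq


def ring_activation_times(n_nodes, speed, start=0, spacing=1.0):
    """Activation times (ms) on a closed ring of nodes.

    speed[k] is the conduction speed (mm/ms) at node k; speed[k] == 0 marks a
    blocked (refractory) node that the wave front cannot enter.
    """
    lat = [float("inf")] * n_nodes
    lat[start] = 0.0
    heap = [(0.0, start)]
    while heap:
        t, k = heapq.heappop(heap)
        if t > lat[k]:
            continue                      # outdated heap entry
        for nb in ((k + 1) % n_nodes, (k - 1) % n_nodes):
            if speed[nb] <= 0.0:
                continue                  # blocked node
            t_nb = t + spacing / speed[nb]
            if t_nb < lat[nb]:
                lat[nb] = t_nb
                heapq.heappush(heap, (t_nb, nb))
    return lat


n = 100
# Without block, the wave leaves the starting point in both directions.
free = ring_activation_times(n, [0.5] * n)
# With a null speed just behind the starting point, it can only go one way.
blocked_speed = [0.5] * n
blocked_speed[n - 1] = 0.0
blocked = ring_activation_times(n, blocked_speed)
```

Without block, node 98 is reached after 4 ms by the wave travelling backwards; with the block it is reached after 196 ms, once the wave has travelled around the whole ring, which is the unidirectional pattern expected in a re-entry circuit.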

Using this formulation, we present visual results in section 2.1.5 that aim to convince the reader of the pertinence of cardiac CT imaging as a support for patient-specific simulations.

Our contributions in this article are:

a relationship between CT LV wall thickness and depolarisation wave front apparent propagation speed (section 2.1.3.2),

a way to model refractoriness in Eikonal models for unidirectional blocks (section 2.1.3).

In section 2.2, our journal article (Fast Personalised Electrophysiological Models from CT Images for Ventricular Tachycardia Ablation Planning) incorporates the results of the previous conference article, and extends it in various ways.

First, the article reflects the fact that between the two articles we could retrieve more EP data coupled with CT imaging. This allowed us to test our personalisation process on activation maps of controlled pacing and not just VT.

Secondly, in an attempt to automate the detection of VT isthmuses on CT images, we introduce a channelness filter, purely based on imaging features.

Thirdly, it briefly describes a multi-modal registration framework based on spherical coordinates that eased the comparisons between CT-based simulations and EP data in our work. These spherical coordinates are in the spirit of the universal ventricular coordinates (Bayer et al. 2018) proposed by others during the same year. This framework was also used in comparative studies between different imaging modalities (Nuñez-Garcia, Cedilnik, Jia, Cochet, et al. 2020; Magat et al. 2020) in collaboration with scientists and clinicians from the University of Bordeaux.

Our contributions in this article are:

a registration framework for multi-modal data integration (section 2.2.2.7),

an image processing algorithm to identify potential VT isthmuses on CT images (section 2.2.2.6),

a validation of the thickness/speed relationship on controlled pacing electro-anatomical maps (section 2.2.3.3).

Since our goal is to establish a clinically usable model personalisation framework in the short term, and since this framework relies on CT image segmentation, it made sense for us to explore automated segmentation strategies in the conference article presented in section 2.3 (Fully Automated Electrophysiological Model Personalisation Framework from CT Imaging). In this section we developed deep learning methods for left ventricular wall segmentation in CT images, with some specific adaptations. Besides the usual segmentation scores (Dice score, Hausdorff distance) used to evaluate this task, knowing that our personalisation methodology relies on LV wall thickness assessment, we evaluated the impact of such an image processing approach on this feature. The segmentation network presented in this section was also used in imaging-related publications resulting from our collaboration with researchers and clinicians from the Bordeaux Hospital (Nuñez-Garcia, Cedilnik, Jia, Sermesant, et al. 2020; Nuñez-Garcia, Cedilnik, Jia, Cochet, et al. 2020). Unlike the previously proposed model-based approach for this task (Ecabert et al. 2008), our segmentation methodology is purely data-driven.
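For readers unfamiliar with the two segmentation scores mentioned above, the following minimal sketch computes them for two small binary masks represented as sets of voxel coordinates. It is a brute-force illustration with hypothetical function names and toy masks, not the evaluation code used in the article; in practice the Hausdorff distance is usually computed on surfaces and expressed in millimetres.

```python
from math import dist


def dice_score(a, b):
    """Dice overlap between two sets of voxel coordinates (1 = identical)."""
    if not a and not b:
        return 1.0
    return 2.0 * len(a & b) / (len(a) + len(b))


def hausdorff_distance(a, b):
    """Symmetric Hausdorff distance (in voxels) between two non-empty sets."""
    def directed(p, q):
        # largest distance from a point of p to its nearest point in q
        return max(min(dist(x, y) for y in q) for x in p)
    return max(directed(a, b), directed(b, a))


# Two 4 x 4 squares, the second one shifted by one voxel along the columns.
reference = {(i, j) for i in range(2, 6) for j in range(2, 6)}
predicted = {(i, j) for i in range(2, 6) for j in range(3, 7)}

dice = dice_score(reference, predicted)
hausdorff = hausdorff_distance(reference, predicted)
```

In this toy case the Dice score is 0.75 and the Hausdorff distance is one voxel. Neither score says directly how a segmentation error translates into a wall thickness error, which is why the thickness itself was also evaluated.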

This article also introduces a way to simulate unipolar electrograms and body surface potentials from activation maps, such as (but not limited to) those produced by the Eikonal model. Several extensions of the Eikonal model have been proposed to simulate ECGs, for instance by Pezzuto et al. (2017); here we directly estimate a local dipole and compute the local electrical field using the dipole formulation detailed by Giffard-Roisin, Jackson, et al. (2016). This rapid electrogram simulation framework was also used in a novel formulation of the ECGi inverse problem (Bacoyannis et al. 2019).

Our contributions in this article are:

a deep learning approach to segment the myocardial wall on high resolution three-dimensional CT images (section 2.3.2.1),

an electrogram simulation model formulation by combining the Eikonal model with an ionic model and the dipole formulation (section 2.3.3.4).

In section 2.4 (Eikonal Model Personalisation Using Invasive Data to Predict Cardiac Resynchronisation Therapy Electrophysiological Response), we applied our cardiac EP model personalisation approach to cardiac resynchronisation therapy (CRT) response.

Contrary to the rest of our work, model personalisation here is based on invasive data. However, this invasive data, intracardiac recordings of electrical activity, is of the same nature as the data we used elsewhere as our support for model calibration and validation. Exploiting such data is not trivial, notably for the reasons mentioned in the introduction of this chapter, and working with this EP data helped us approach our main research focus with a sharper eye. More precisely, we addressed here the question of precisely detecting the depolarisation onsets and deriving the wave front propagation speed from such data.

We also illustrate in this section some of the possible variations of Eikonal models that we mentioned earlier.

Our contributions in this article are:

a methodology to identify initial depolarisation sites on incomplete intra-cardiac EP data (section 2.4.4),

an Eikonal model-based approach to identify apparent propagation speed on intra-cardiac electro-anatomical maps (section 2.4.2.2).

Cedilnik, N., Duchateau, J., Dubois, R., Jaïs, P., Cochet, H., and Sermesant, M. Functional Imaging and Modelling of the Heart (FIMH), 2017

2.1 VT scan

2.1.1 Introduction

Sudden cardiac death (SCD) due to ventricular arrhythmia is responsible for hundreds of thousands of deaths each year (Mozaffarian et al. 2016). A high proportion of these arrhythmias are related to ischemic cardiomyopathy, which promotes both ventricular fibrillation and ventricular tachycardia (VT). The cornerstone of SCD prevention in an individual at risk is the use of an implantable cardioverter defibrillator (ICD). ICDs reduce mortality, but recurrent VTs in recipients are a source of significant morbidity. VT causes syncope, heart failure, painful shocks and repetitive ICD interventions reducing device lifespan. In a small number of cases called arrhythmic storms, the arrhythmia burden is such that the ICD can be insufficient to avoid arrhythmic death.

In this context, radio-frequency ablation aiming at eliminating re-entry circuits responsible for VT has emerged as an interesting option. VT ablation has already demonstrated its benefits (Ghanbari et al. 2014) but still lacks clinical consensus on optimal ablation strategy (Aliot et al. 2009). Two classical strategies have been developed by electrophysiologists. The first strategy focuses on mapping the arrhythmia circuit by inducing VT before ablating the critical isthmus. The second strategy focuses on the substrate, eliminating all abnormal potentials that may contain such isthmuses.

Both strategies are very time-consuming, with long mapping and ablation phases respectively, and procedures are therefore often incomplete in patients with poor general condition. Many authors have thus shown an interest in developing methods coupling non-invasive exploration and modelling to predict both VT risk and optimal ablation targets (Ashikaga et al. 2013; H. Arevalo et al. 2013; Rocio Cabrera-Lozoya et al. 2014; Chen et al. 2016; Wang et al. 2016). Most of the published work in this area relies on cardiac magnetic resonance imaging (CMR), at the moment considered as the gold standard to assess myocardial scar, in particular using late gadolinium enhancement sequences. However, despite being the reference method to detect fibrosis infiltration in healthy tissue, clinically available CMR methods still cannot reach the spatial resolution (under 2 mm) needed to accurately assess scar heterogeneity in chronic healed myocardial infarction, because the latter is associated with severe wall thinning (down to 1 mm). Moreover, most patients recruited for VT ablation cannot undergo CMR due to an already implanted ICD.

In contrast, recent advances in cardiac computed tomography (CT) technology now enable the assessment of cardiac anatomy with extremely high spatial resolution. We hypothesized that such resolution could be of value in assessing the heterogeneity of myocardial thickness in chronic healed infarcts. Based on our clinical practice, we indeed believe that detecting VT isthmuses relies on detecting thin residual layers of tissue rich in muscle cells inside infarct scars, which CMR fails to identify due to the partial volume effect. CT image acquisition is less operator-dependent than CMR, making it a first-class imaging modality for automated and reproducible processing pipelines. CT presents fewer contraindications than CMR (it is notably feasible in patients with an ICD), it costs less, and it is more widely available.

The aim of this study was to assess the relationship between wall thickness heterogeneity, as assessed by CT, and ventricular tachycardia mechanisms in chronic myocardial infarction, using a computational approach (see the overall pipeline in fig. 5). This manuscript presents the first steps of this pipeline, in order to evaluate the relationship between simulations based on wall thickness and electro-anatomical mapping data.

2.1.2 Image Acquisition and Processing

2.1.2.1 Population

The data we used come from 7 patients (age 58 \(\pm\) 7 years, 1 woman) referred for catheter ablation therapy in the context of post-infarction ventricular tachycardia. The protocol of this study was approved by the local research ethics committee.

2.1.2.2 Acquisition

All the patients underwent contrast-enhanced ECG-gated cardiac multi-detector CT (MDCT) using a 64-slice clinical scanner (SOMATOM Definition, Siemens Medical Systems, Forchheim, Germany) between 1 and 3 days prior to the electrophysiological study. This imaging study was performed as part of standard care, as cardiac MDCT is indicated before electrophysiological procedures to rule out intracardiac thrombi. Coronary angiographic images were acquired during the injection of a 120 ml bolus of iomeprol 400 mg/ml (Bracco, Milan, Italy) at a rate of 4 ml/s. Radiation exposure was typically between 2 and 4 mSv. Images were acquired in supine position, with tube current modulation set to end-diastole. The resulting voxels have a dimension of \(0.4\times{}0.4\times{}1\) mm\(^3\).

2.1.2.3 Segmentation

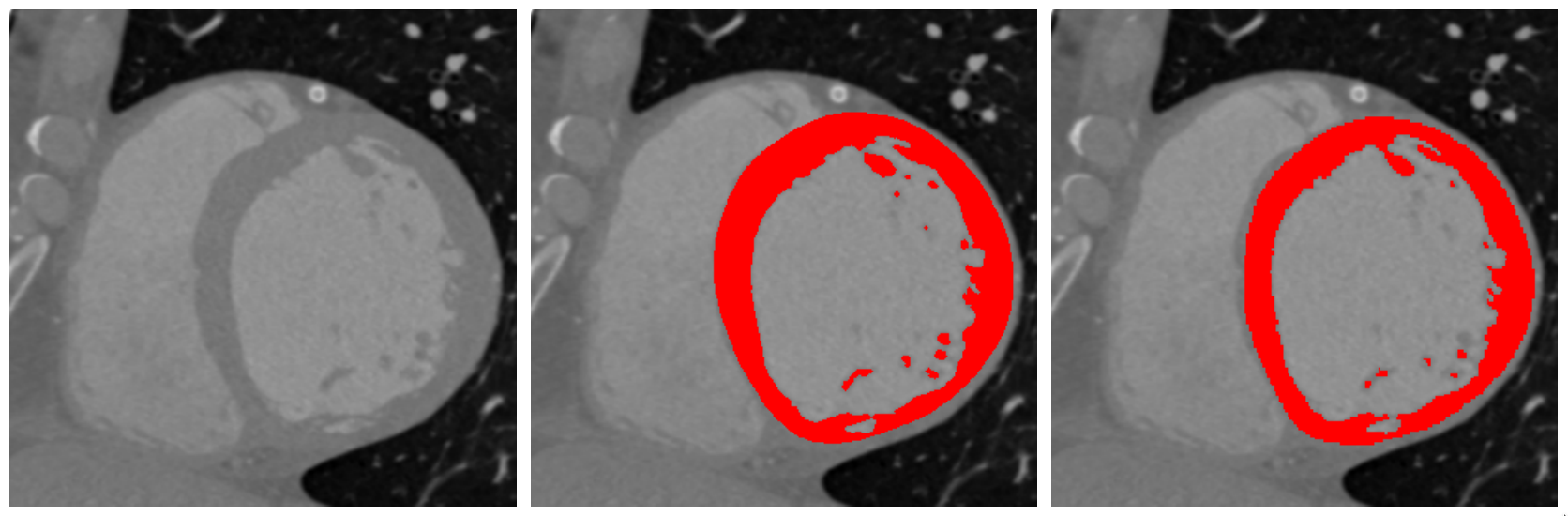

The left ventricular endocardium was automatically segmented using a region-growing algorithm, the thresholds to discriminate between the blood pool and the wall being optimized from a prior analysis of blood and wall densities. The epicardium was segmented using a semi-automated tool based on the interpolation of manually-drawn polygons. All analyses were performed using the MUSIC software (IHU Liryc Bordeaux, Inria Sophia Antipolis, France). An example of the result of such segmentation can be seen in fig. 6.

2.1.2.4 Wall Thickness Computation

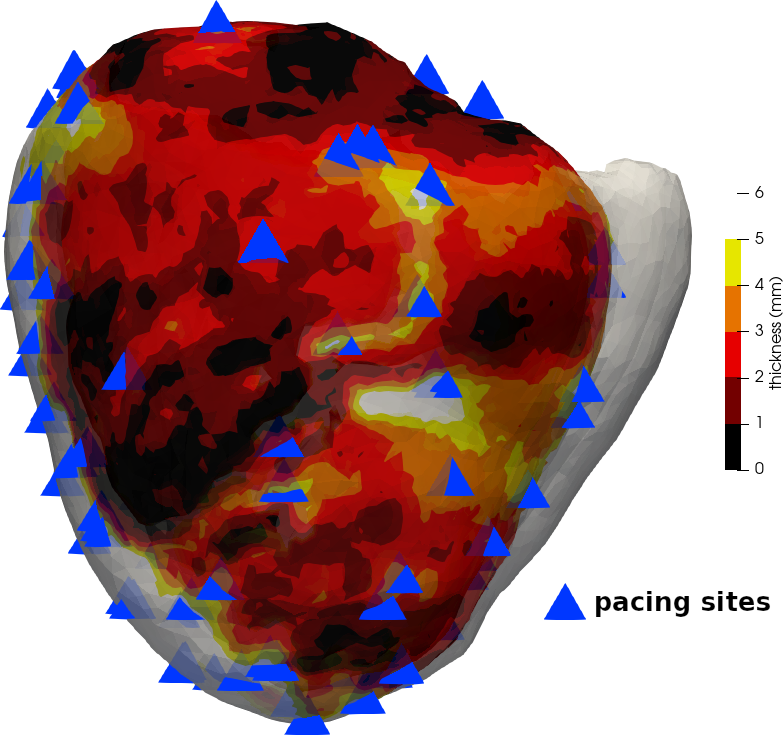

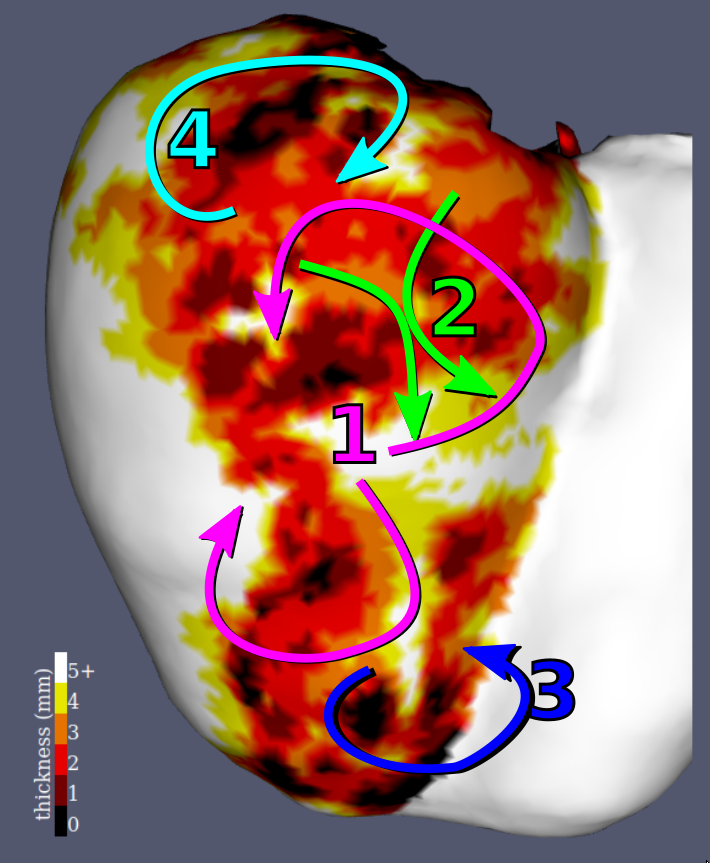

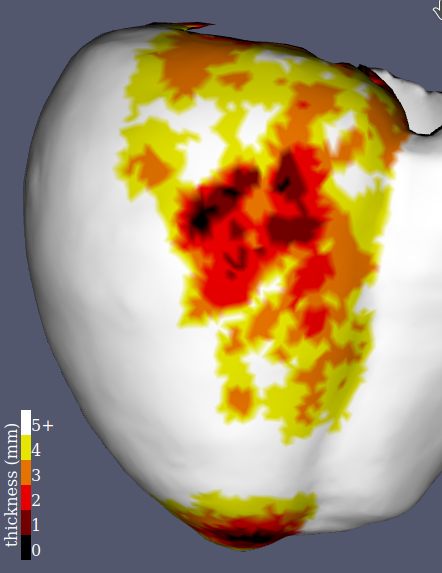

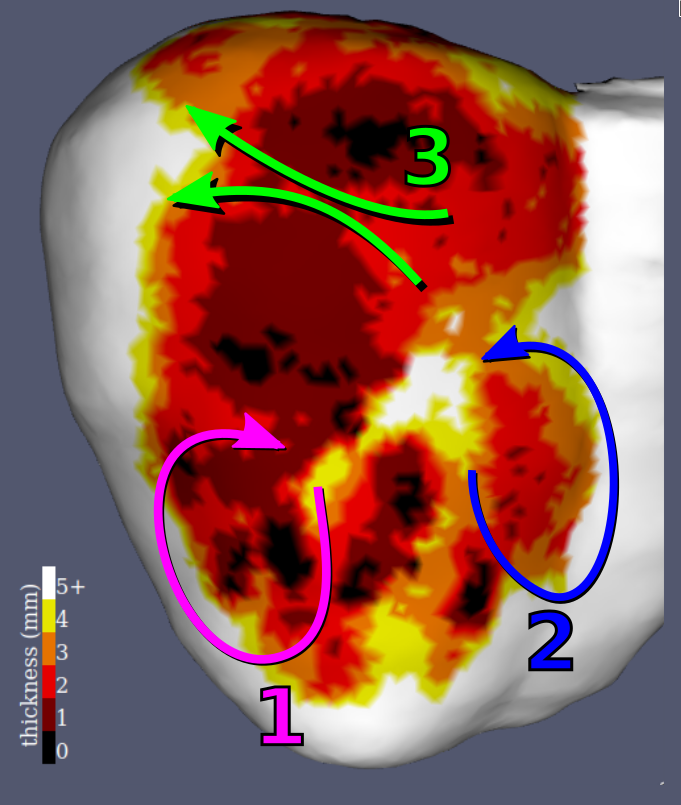

In CT-scan images, only the healthy part of the myocardium is visible, hence wall thinning is a good marker of scar localization and abnormal electro-physiological parameters (Komatsu et al. 2013). To accurately compute wall thickness, and to overcome difficulties related to its definition on three-dimensional images, we chose the method of Yezzi and Prince (Yezzi and Prince 2003). It is based on solving the Laplace equation with Dirichlet boundary conditions to determine the trajectories along which the thickness will be computed. One advantage of such a thickness definition is that it is defined for every voxel, which is later useful in our model (see section 2.1.3.2). An example of the results can be observed in fig. 7 (left and middle), along with a comparison with the much lower level of detail in scar morphology assessment that is obtained with a voltage map from a sinus rhythm recording.
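The idea behind a Laplace-based thickness can be illustrated with a minimal two-dimensional sketch. It is not the implementation used in this study: the function names, the Jacobi relaxation and the explicit tracing of a single trajectory are assumptions made for illustration (Yezzi and Prince instead solve additional first-order PDEs to obtain the thickness at every voxel at once).

```python
import numpy as np


def solve_laplace(wall, inner, n_iter=2000):
    """Jacobi relaxation of the Laplace equation inside `wall`.

    Dirichlet conditions: u = 0 in the blood pool (`inner`), u = 1 outside.
    """
    u = np.ones(wall.shape)
    u[inner] = 0.0
    u[wall] = 0.5
    for _ in range(n_iter):
        avg = 0.25 * (np.roll(u, 1, 0) + np.roll(u, -1, 0)
                      + np.roll(u, 1, 1) + np.roll(u, -1, 1))
        u[wall] = avg[wall]  # only wall voxels are updated
    return u


def thickness_at(point, u, wall, step=0.25, max_steps=10000):
    """Length (in voxels) of the gradient trajectory of `u` through `point`."""
    grad = np.stack(np.gradient(u))  # shape (2, rows, cols)
    total = 0.0
    for sign in (1.0, -1.0):  # towards the epicardium, then the endocardium
        pos = np.array(point, dtype=float)
        for _ in range(max_steps):
            i, j = np.round(pos).astype(int)
            if not wall[i, j]:
                break
            g = grad[:, i, j]
            norm = np.linalg.norm(g)
            if norm == 0.0:
                break
            pos += sign * step * g / norm
            total += step
    return total


# Synthetic annular "wall": inner radius 10 voxels, outer radius 16 voxels.
yy, xx = np.mgrid[:49, :49]
radius = np.hypot(yy - 24, xx - 24)
inner = radius < 10
wall = (radius >= 10) & (radius < 16)
u = solve_laplace(wall, inner)
print(thickness_at((37, 24), u, wall))  # close to the true thickness of 6 voxels
```

On this synthetic ring the trajectories are radial, so the traced length should approach the difference between the two radii, up to discretisation error.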

2.1.3 Cardiac Electrophysiology Modelling

2.1.3.1 Eikonal Model

We chose the Eikonal model for simulating the wave front propagation for the following reasons:

It requires very few parameters compared with biophysical models, making it more suitable for patient-specific personalization in a clinical setting.

Its output is an activation map directly comparable with the clinical data.

It allows for very fast solving thanks to the fast marching algorithm.

We used the standard Eikonal formulation in this study:

\[v(X)\,\|\nabla T(X)\| = 1 \tag{2}\]

The sole parameter required besides the myocardial geometry is the wave front propagation speed \(v(X)\) (see section 2.1.3.2). More sophisticated biophysical models allow for more precise results; however, this precision relies on an accurate estimate of the parameters and the necessary data to such ends are not available in clinical practice.
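To make the role of \(v(X)\) in eq. 2 concrete, the following is a minimal first-order fast marching sketch on a two-dimensional regular grid. It is a pure-Python illustration with hypothetical function names, not the SimpleITK implementation used in this study, and it assumes isotropic spacing and a speed of zero in non-conducting voxels.

```python
import heapq
import math

import numpy as np


def _local_update(T, i, j, f):
    """First-order upwind solution of the Eikonal equation at voxel (i, j).

    `f` is the grid spacing divided by the local speed.
    """
    rows, cols = T.shape
    a = min(T[i - 1, j] if i > 0 else math.inf,
            T[i + 1, j] if i < rows - 1 else math.inf)
    b = min(T[i, j - 1] if j > 0 else math.inf,
            T[i, j + 1] if j < cols - 1 else math.inf)
    if abs(a - b) >= f:  # the front arrives along a single axis
        return min(a, b) + f
    return 0.5 * (a + b + math.sqrt(2.0 * f * f - (a - b) ** 2))


def fast_marching(speed, seeds, spacing=1.0):
    """Activation times from `seeds`; voxels with speed <= 0 are never reached."""
    T = np.full(speed.shape, np.inf)
    frozen = np.zeros(speed.shape, dtype=bool)
    heap = []
    for seed in seeds:
        T[seed] = 0.0
        heapq.heappush(heap, (0.0, seed))
    while heap:
        _, (i, j) = heapq.heappop(heap)
        if frozen[i, j]:
            continue
        frozen[i, j] = True  # smallest tentative time is final
        for ni, nj in ((i + 1, j), (i - 1, j), (i, j + 1), (i, j - 1)):
            if not (0 <= ni < speed.shape[0] and 0 <= nj < speed.shape[1]):
                continue
            if frozen[ni, nj] or speed[ni, nj] <= 0.0:
                continue
            t_new = _local_update(T, ni, nj, spacing / speed[ni, nj])
            if t_new < T[ni, nj]:
                T[ni, nj] = t_new
                heapq.heappush(heap, (t_new, (ni, nj)))
    return T


speed = np.full((21, 21), 0.6)  # mm/ms, i.e. 0.6 m/s on a 1 mm grid
speed[5:16, 12] = 0.0           # non-conducting barrier
T = fast_marching(speed, seeds=[(10, 10)])  # activation times in ms
```

Because each voxel is finalised once, in order of increasing activation time, the cost grows only slightly faster than linearly with the number of voxels, which is what makes this family of solvers attractive for clinical time frames.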

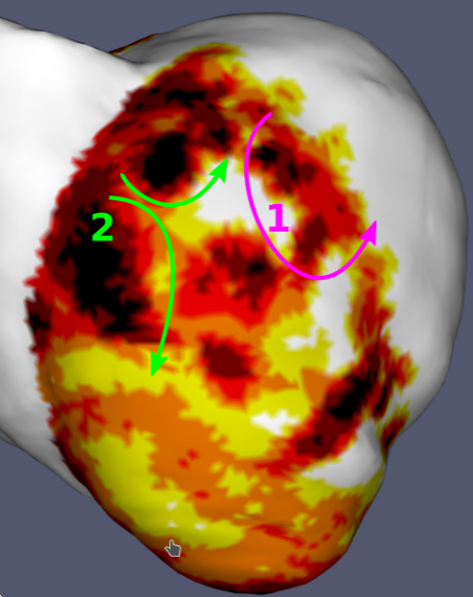

This original approach enabled us to parametrize directly the model on the basis of the thickness computed from the images. Additionally, we generated a unidirectional onset by creating an artificial conduction block. This block was needed to represent the refractoriness of the previously activated tissue that the Eikonal model does not include. An illustration of this phenomenon can be observed in the green box in fig. 8.

2.1.3.2 Wave Front Propagation Speed Estimation

As there is a link between myocardial wall thickness and its viability, it was possible to parameterize our model from the previous wall thickness computation (see section 2.1.2.4). There are basically three different cases:

Healthy myocardium where the wave front propagation speed is normal.

Dense fibrotic scar where the wave front propagation is extremely slow.

Gray zone area where it is somewhere in between.

Instead of arbitrarily choosing a specific speed for the “gray zone,” we exploited the resolution offered by our images to come up with a smooth, continuous estimate of the speed between healthy and scar myocardium. This resulted in the following logistic transfer function:

\[v(X) = \frac{v_{max}}{1 + e^{r(p - t(X))}} \tag{3}\]

where \(v_{max}\) is the maximum wave front propagation speed, \(t(X)\) the thickness at voxel \(X\), \(p\) the inflection point of the sigmoidal function, i.e., the thickness at which we reach \(\frac{1}{2}v_{max}\), and \(r\) a parameter defining the steepness of the transfer function (expressed in mm\(^{-1}\), so that the exponent is dimensionless).

More specifically, we chose the following parameters:

\(v_{max} = 0.6\) m/s, as it is the conduction speed of healthy myocardium,

\(p = 3\) mm, as it was considered the gray zone “center,”

\(r = 2\) mm\(^{-1}\), in order to obtain virtually null speed in areas where thickness is below 2 mm.

The resulting transfer function can be visualized in fig. 8.
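As an illustration, eq. 3 with the parameters listed above can be evaluated in a few lines. The function name and the sample thicknesses are chosen here for the example only.

```python
import numpy as np


def thickness_to_speed(thickness_mm, v_max=0.6, p=3.0, r=2.0):
    """Logistic transfer function of eq. 3 (speed in m/s, thickness in mm)."""
    thickness_mm = np.asarray(thickness_mm, dtype=float)
    return v_max / (1.0 + np.exp(r * (p - thickness_mm)))


# Sample thicknesses (mm) ranging from dense scar to healthy wall.
speeds = thickness_to_speed([1.0, 2.0, 3.0, 5.0, 8.0])
print(speeds)
```

Applied to a whole thickness image, the same call returns the speed image expected by the Eikonal solver, since NumPy evaluates the expression voxel-wise.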

The fibre orientations were not included in our simulations. They might be in our final pipeline, but it is worth noting that we obtained satisfying results without this parameter.

2.1.4 Electrophysiological Data

In order to evaluate the simulation results, we compared them to electro-anatomical mapping data. As part of the clinical management of their arrhythmia, all the patients underwent an electrophysiological procedure using a 3-dimensional electro-anatomical mapping system (Rhythmia, Boston Scientific, USA) and a basket catheter (Orion, Boston Scientific, USA) dedicated to high-density mapping. During the procedure, ventricular tachycardia was induced using a dedicated programmed stimulation protocol, and the arrhythmia could be mapped at extremely high density (about 10 000 points per map). Patients were then treated by catheter ablation targeting the critical isthmus of the recorded tachycardia, as well as potential other targets identified either by pace mapping or sinus rhythm substrate mapping. These maps were manually registered to the CT images.

2.1.5 Results

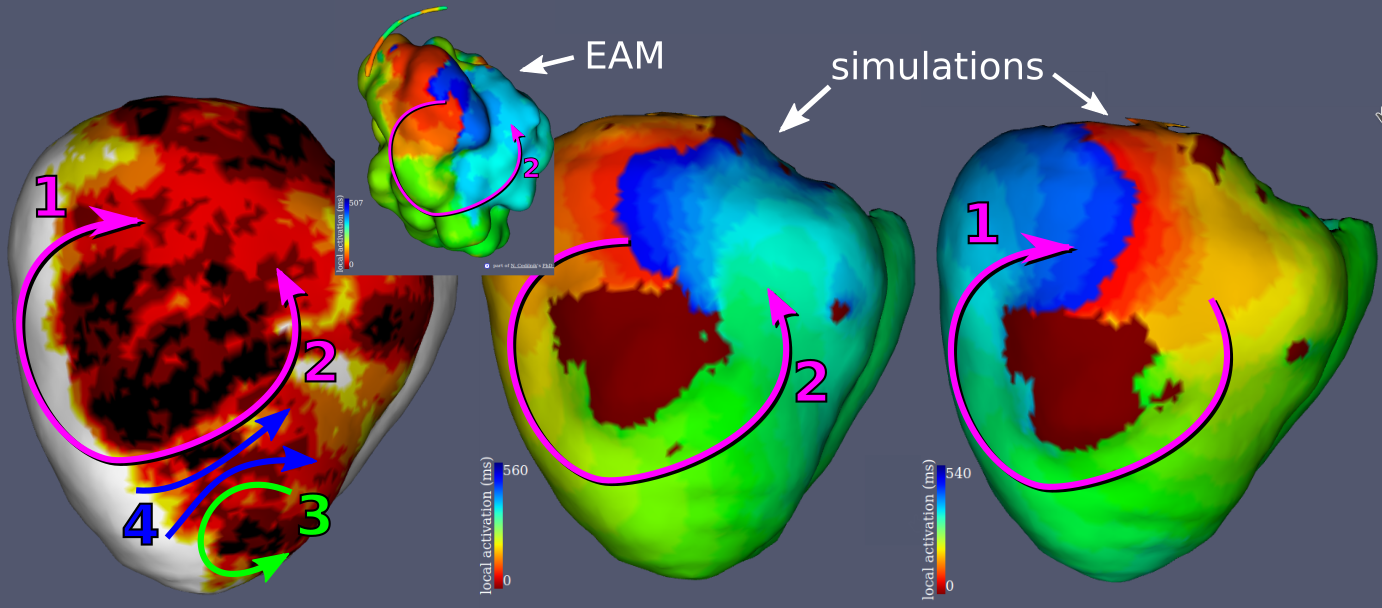

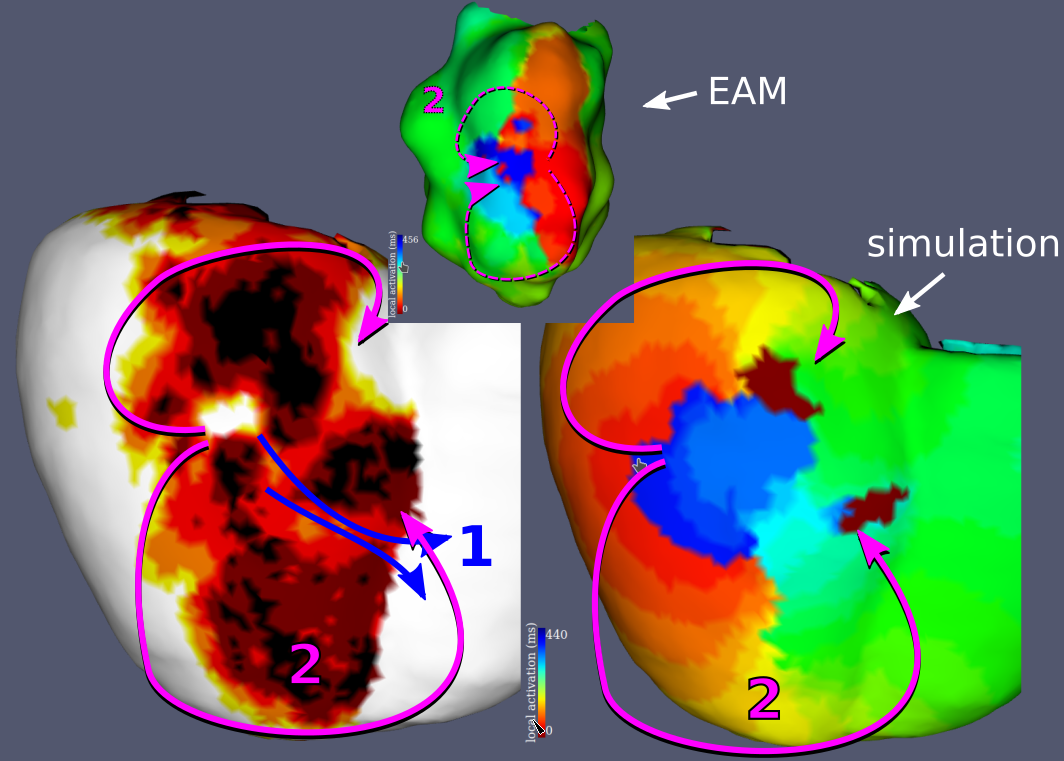

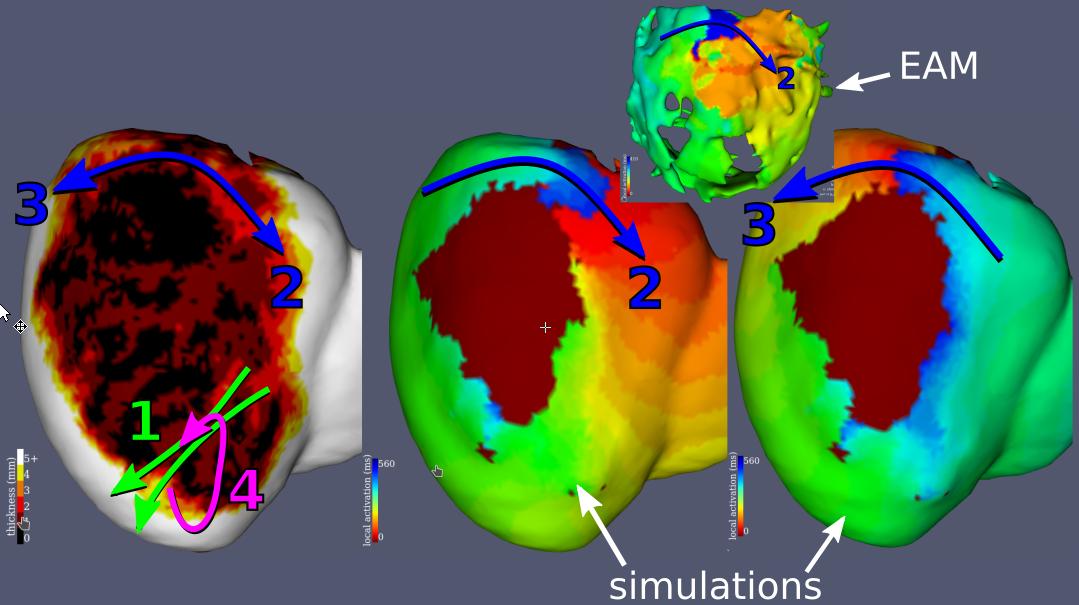

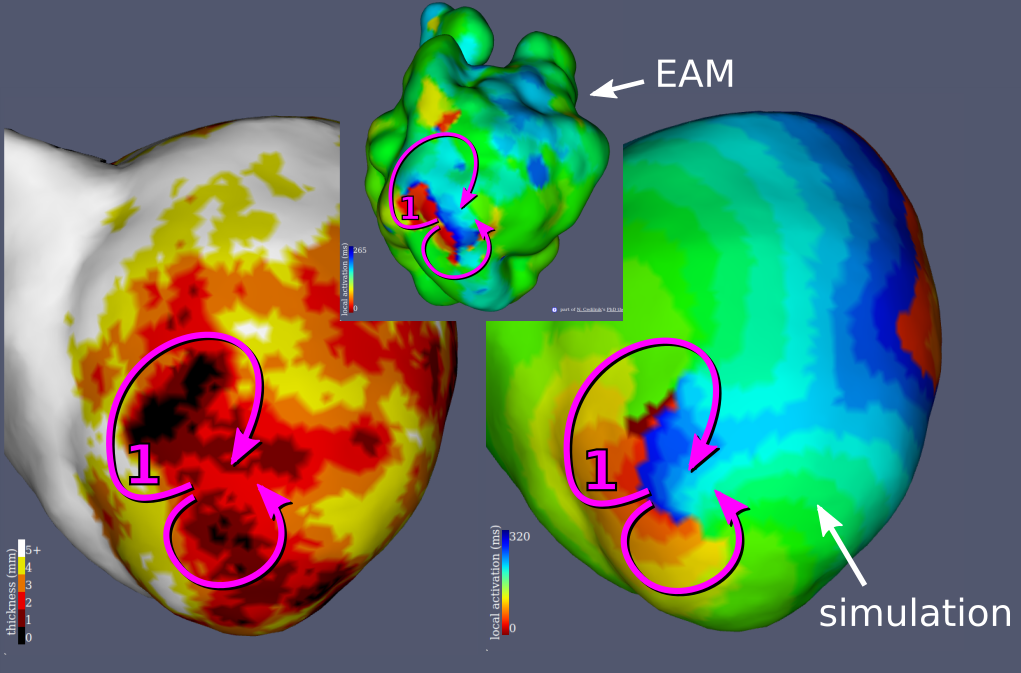

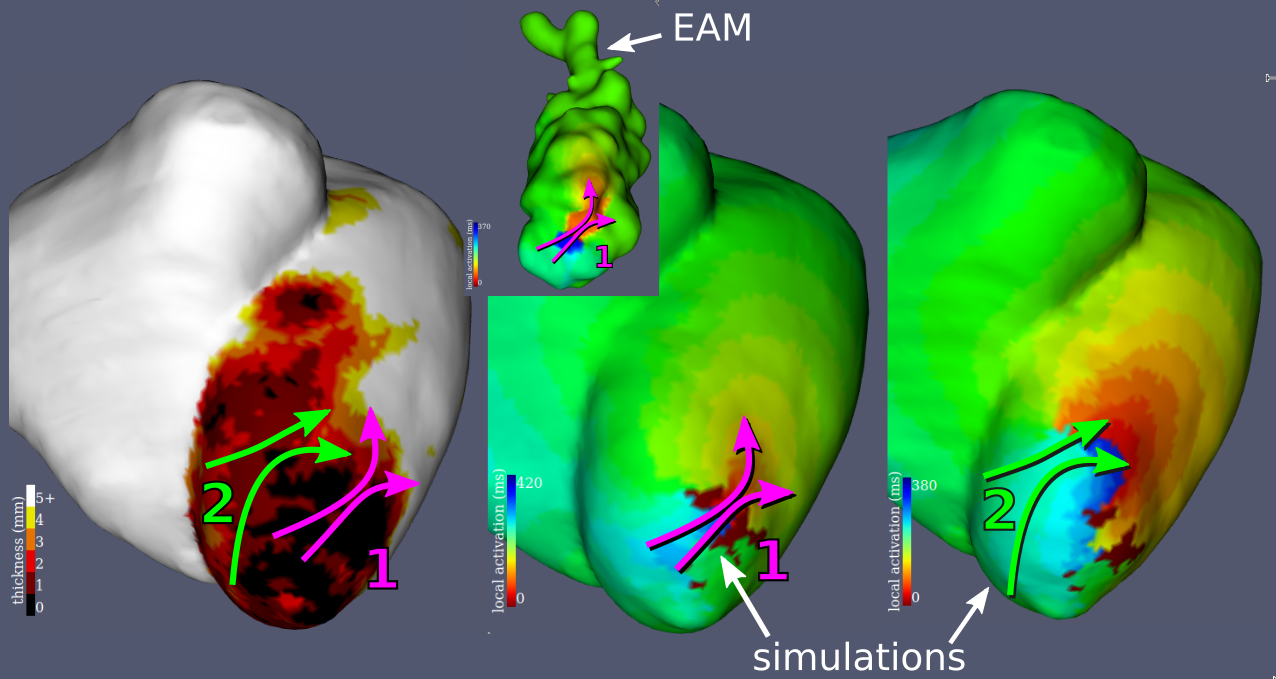

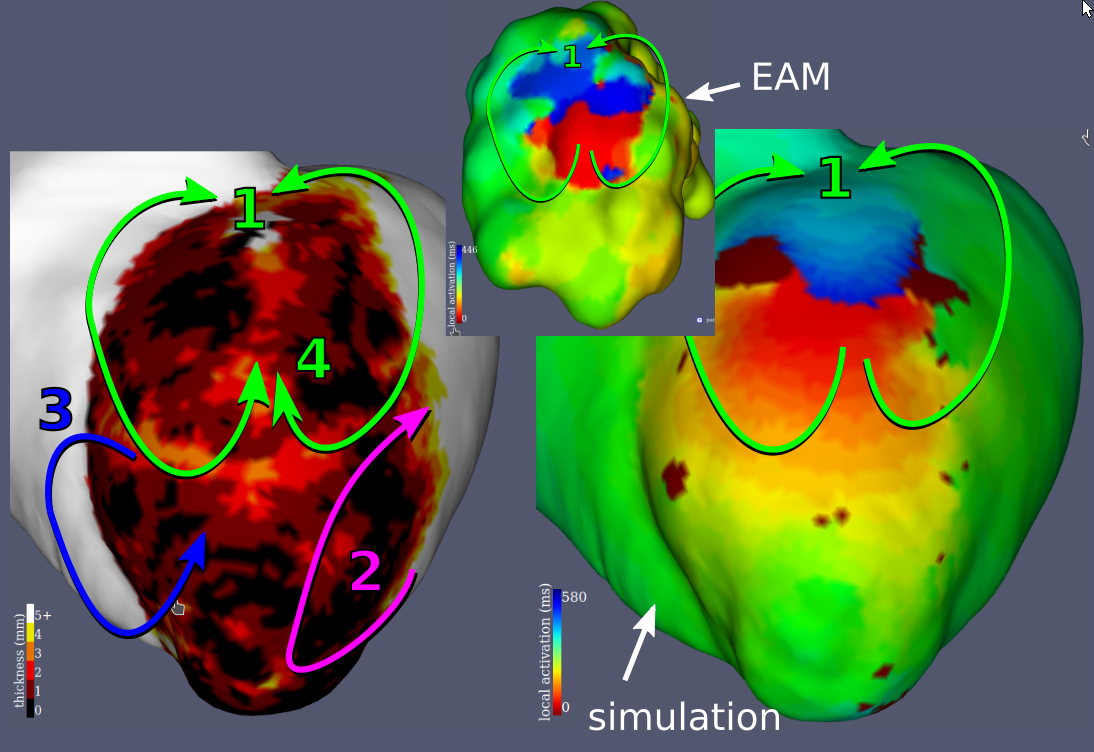

Example results of the simulations and their comparisons to mapping data are presented in fig. 9. In each case, a qualitatively similar re-entry circuit is predicted.

Similar visual results were reached for all the VTs, without any further tuning of the model. However, to reach such results we needed to pick the stimulation points and directions very carefully.

2.1.6 Implementation

All the activation maps acquired during ventricular tachycardia (10 maps of 10 different circuits acquired in 7 patients) were exported to Matlab software (Mathworks, USA).

The simulation was computed directly on the voxel data as the resolution of our images represents highly detailed anatomy, and to avoid arbitrary choices inherent to mesh construction. We then mapped the results to a mesh but for visualization purposes only.

We used a custom Python package for the thickness computation and the SimpleITK (Yaniv et al. 2018) fast marching implementation to solve the Eikonal equation.

The artificial conduction block was created by setting the wave front propagation speed to zero in a parametrically defined disk of 15 mm radius, 2 mm behind the stimulation points, orthogonal to the desired stimulation direction.
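A possible way to build such a block on a voxel grid is sketched below. The function name, its arguments and the default slab thickness are assumptions made for this example: the text only specifies the 15 mm radius, the 2 mm offset and the orientation.

```python
import numpy as np


def add_conduction_block(speed, spacing_mm, onset_mm, direction,
                         radius_mm=15.0, offset_mm=2.0, half_thickness_mm=None):
    """Return a copy of `speed` set to zero inside a disk behind the onset.

    The disk is centred `offset_mm` behind `onset_mm`, orthogonal to
    `direction`. Its half-thickness defaults to the largest voxel spacing
    (an assumption) so that the front cannot leak through an oblique disk;
    it must stay smaller than `offset_mm` to leave the onset itself untouched.
    """
    spacing_mm = np.asarray(spacing_mm, dtype=float)
    direction = np.asarray(direction, dtype=float)
    direction = direction / np.linalg.norm(direction)
    if half_thickness_mm is None:
        half_thickness_mm = spacing_mm.max()
    centre = np.asarray(onset_mm, dtype=float) - offset_mm * direction
    # Physical coordinates (mm) of every voxel, one row per voxel.
    grid = np.indices(speed.shape).reshape(speed.ndim, -1).T * spacing_mm
    rel = grid - centre
    axial = rel @ direction  # signed distance to the disk plane
    radial = np.linalg.norm(rel - np.outer(axial, direction), axis=1)
    in_disk = (np.abs(axial) <= half_thickness_mm) & (radial <= radius_mm)
    blocked = speed.copy()
    blocked[in_disk.reshape(speed.shape)] = 0.0
    return blocked


speed = np.full((41, 41, 41), 0.6)  # toy speed image on a 1 mm isotropic grid
blocked = add_conduction_block(speed, (1.0, 1.0, 1.0),
                               onset_mm=(20.0, 20.0, 20.0),
                               direction=(1.0, 0.0, 0.0))
```

The wave front started at the onset can then only leave in the chosen direction and has to travel around the disk to reach the tissue behind it.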

Starting from the results of the semi-automatic CT image segmentation, the complete simulation pipeline, with an informal benchmark realized on an i7-5500U CPU at 2.40 GHz (using only 1 core), looks as follows:

Compute thickness from masks [43s]

Apply transfer function [0.5s]

Select pacing site and create refractory block [7s]

Solve the Eikonal equation [0.5s]

2.1.7 Discussion

This article presents a framework that may be suitable for identifying VT RF ablation targets and predicting VT risk in daily clinical practice. The results presented here are preliminary but promising, due to the robustness of the image processing, the fast simulation of the model, and the high resolution of CT scan images. This resolution was crucial in the characterization of the VT isthmuses presented in this study.

The main limitations of the results presented here are the limited sample size and the lack of quantitative evaluation of the simulation results. It is however worth noting that we were able to obtain such results without advanced calibration of the model’s parameters. To address the lack of quantitative evaluation, we plan to map the electrophysiological data onto the image-based meshes for a more quantitative comparison. However, this remains challenging due to the shape differences. Heterogeneity in CT images also induces a bias in thickness computation that may require patient-specific personalization of the transfer function parameters.

We believe that in order to fully automate the pipeline, we need not only to determine a ridge detection strategy, but also to overcome some of the simplifications induced by the Eikonal modelling choice and enhance the circuit characterization.

Cedilnik, N., Duchateau, J., Dubois, R., Sacher, F., Jaïs, P., Cochet, H., and Sermesant, M. EP-Europace, 2018.

2.2 Going further with VT scan

2.2.1 Background

Personalised, i.e., patient-specific, simulation of the heart electrical behaviour is a dynamic research field (Gray and Pathmanathan 2018). Building such simulation is often a challenge as mathematical models can be computationally costly and clinical data is sparse and noisy. Most of the work in this area relies on extracting fibrosis from late-enhancement cardiac magnetic resonance imaging (MRI) (Lopez-Perez, Sebastian, and Ferrero 2015). In a post-infarct context, this geometry is then classically divided into 3 different zones based on MRI signal processing: the healthy myocardium, the dense scar and a grey zone where fibrosis and remaining functional cardiomyocytes coexist. Grouped together, these three zones represent a three-dimensional domain upon which a set of partial differential equations (PDE) is solved. The model parameters are then chosen differently according to the different zones of the domain to represent the different electrophysiological properties of these tissues. Models typically used are reaction-diffusion models (Chen et al. 2016), where the reaction part mimics cell-level changes in membrane potential and the diffusion part reflects the ability of cardiac action potential to propagate through specialised structures. Adequately parameterising such models is a tedious task; solving takes a lot of computational power and time, making them hard to enter medical practice in a clinically compatible time frame.

Additionally, before the actual simulation computation, the binary masks (outputs from image segmentation) often need to be transformed into another three-dimensional representation, a 3D mesh, more suited to finite element PDE solvers (Lopez-Perez, Sebastian, and Ferrero 2015). Choosing adequate parameters for the mesh generation step is critical in order to reach reasonable numerical accuracy and computational time. It requires specific expertise rarely available in day-to-day clinical practice.

Some authors have reported realistic intra-cardiac electrogram simulations (Rocío Cabrera-Lozoya et al. 2016), but the outputs of such models are usually visual simulations of the wave front propagation in the cardiac tissue, i.e. activation maps (Chen et al. 2016). Either way, the goal of such simulations is always to identify abnormal activation patterns linked to fatal arrhythmias. They could improve sudden cardiac death (SCD) risk prediction (H. J. Arevalo et al. 2016), a major challenge in cardiology (Hill et al. 2016).

They can also be used to improve the outcome of post-infarct re-entrant ventricular tachycardia (VT) radio-frequency ablation (RFA) by contributing to the planning phase of the intervention (Rocío Cabrera-Lozoya et al. 2016). In these interventions, the VT is usually induced and mapped (when hemodynamically tolerated), allowing the clinician to identify the re-entry isthmus(es) to be ablated. This risky and long target identification phase still drastically limits the widespread adoption and success rate of such interventions. Moreover, most of these patients already carry an implantable cardioverter-defibrillator (ICD), which makes model personalisation from MRI difficult if not impossible.

It has been reported that cardiac computed tomography (CT) images could be useful for VT RFA (Mahida et al. 2017). CT offers better cost, accessibility, inter-centre and inter-personal reproducibility than MRI. Most notably CTs are not a problem in ICD carriers in whom the image quality and spatial resolution are preserved unlike with MRI. This is important as a majority of scar related VT ablations are conducted in ICD carriers. The better resolution of CT images could also be critical in appreciating scar heterogeneity and thus identifying potential targets.

On CT images, the infarct scar is characterised by an apparent thinning of the myocardial wall (Mahida et al. 2017), which has been shown to correlate with low voltage areas (Ghannam et al. 2018). Some VT re-entry isthmuses are also visible on such images, with a characteristic morphology on myocardial wall thickness maps. It has indeed been recently shown that they appear as a zone of moderate thinning bordered by more intense thinning and joining two areas of normal thickness (Ghannam et al. 2018).

In this paper we propose a novel framework to simulate activation maps from CT images. It uses CT myocardial wall thickness to parameterise a fast organ-level wave front propagation model in a pipeline visually represented in fig. 10.

2.2.2 Methods

2.2.2.1 Cardiac Computed Tomography Images

Images were acquired using contrast-enhanced ECG-gated cardiac multi-detector CT on a 64-slice clinical scanner (SOMATOM Definition, Siemens Medical Systems, Forchheim, Germany). Coronary angiography images were acquired during the injection of a 120 ml bolus of iomeprol 400 mg/ml (Bracco, Milan, Italy) at a rate of 4 ml/s. Radiation exposure was typically between 2 and 4 mSv. Images were acquired in supine position, with tube current modulation set to end-diastole. The resulting voxels have a dimension of 0.4×0.4×1 mm³, a finer resolution than what clinically available MRI can produce. A resulting short-axis slice can be visualised in fig. 10 (left part).

2.2.2.2 Wall Segmentation

On these images, the left ventricle was segmented as follows.

The endocardial mask was segmented using a region-growing algorithm, the thresholds to discriminate between the blood pool and the wall being optimised from a prior analysis of blood and wall densities.

The epicardial mask was segmented using a semi-automated tool built within the MUSIC software (IHU Liryc Bordeaux, Inria Sophia Antipolis, France): splines were manually drawn on a few slices and interpolated in-between.

The resulting myocardial wall mask (epicardial mask minus the endocardial mask) has around one million voxels.

2.2.2.3 Wall Thickness Estimation

On such images, chronic infarct scars appear as a thinning of the myocardial wall (Mahida et al. 2017). To localise scars, instead of successive dilations of the endocardial masks that were used in other studies (Ghannam et al. 2018), we opted for a fully automated and continuous method (Yezzi and Prince 2003). Estimating the thickness of a structure from 3D medical images is not trivial. The accuracy can be compromised depending on how the two surfaces are described, and how the distance between them is computed. In this work, we chose to rely on a robust method to compute the shortest path between the endocardium and the epicardium, and then integrate the distance along this path. This is based on solving a partial differential equation to compute a thickness value at every voxel of the wall mask (Yezzi and Prince 2003). Example results of such thickness maps are presented on fig. 15.

2.2.2.4 Eikonal Model

Numerous models were proposed in the literature to simulate cardiac action potential dynamics. In order to obtain a fast and robust pipeline, we used the Eikonal model of wave front propagation that directly outputs an activation map. It is very robust to changes in image resolution and it can be computed very efficiently with the fast-marching algorithm.

Model inputs are:

The myocardial wall mask, i.e., the domain of resolution.

For every voxel of this mask, a local conduction velocity.

One or more onset point(s), to initiate propagation.

(Optionally) An artificial unidirectional block, in order to orientate the initial propagation, used to reproduce re-entrant VT patterns.

Let \(v\) be the local conduction velocity, \(T\) the local activation time and \(x\) a voxel of the wall mask, the formal expression of this model is the following:

\[v(x) \parallel \nabla T(x) \parallel = 1\]

To solve this equation we used the fast marching algorithm implementation in the Insight Segmentation and Registration Toolkit (ITK, Kitware, USA). It did not require any meshing step as this implementation directly takes advantage of the regular grid of CT 3D images.

2.2.2.5 Model parameters

Due to a “zig-zag” course of activation in surviving bundles existing in the infarcted tissue, the wave front propagates very slowly and finally exits the scar (De Bakker et al. 1993). We hypothesised that the wave front propagation velocity could be estimated from the wall thickness using a transfer function that would have the following features:

It should reach a plateau, \(v_{\max}\), above physiological wall thicknesses.

It should be virtually null, \(v_{\min}\), below certain thicknesses, where almost no conducting cells remain.

Let \(v\) be the velocity at each voxel \(x\) of the domain, \(W\) the thickness, \(p\) the mid-point between “pure scar” and “healthy” thicknesses and \(r\) a parameter influencing the slope of the transition between \(v_{\min}\) and \(v_{\max}\). We designed the following continuous transfer function from wall thickness to wave front propagation velocity (fig. 12):

\[v(x) = \frac{v_{\max} - v_{\min}}{1 + e^{r(p - W(x))}} + v_{\min}\]
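As a quick check added here (assuming \(r > 0\)), this function satisfies the two requirements listed above and takes the mean of the two plateaus at \(W = p\):

\[\lim_{W - p \to +\infty} v = v_{\max}, \qquad \lim_{p - W \to +\infty} v = v_{\min}, \qquad v\big|_{W = p} = \frac{v_{\max} - v_{\min}}{2} + v_{\min} = \frac{v_{\max} + v_{\min}}{2}.\]

Since physical thicknesses are bounded below by zero, the lower plateau is only approached in practice when \(r\,p\) is large enough, i.e. when the transition is steep relative to the mid-point thickness.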

The fast-marching solver needs a “stopping value,” i.e., a maximum wave front reaching time. In our experiments, we set it to 500 ms and also assigned this value to non-activated parts of the domain.

In order to model a re-entrant VT pattern, we added an artificial “refractory wall,” as previously described in (Cedilnik et al. 2017). A conduction block orthogonal to the isthmus longitudinal axis was added a few voxels behind the simulation onset point. This allows us to model tissue refractoriness while staying within the simple Eikonal framework, which keeps the computational cost very low. This block is only needed to reproduce VT activation maps, not activation maps from controlled pacing.

2.2.2.6 Automatic ridge detection

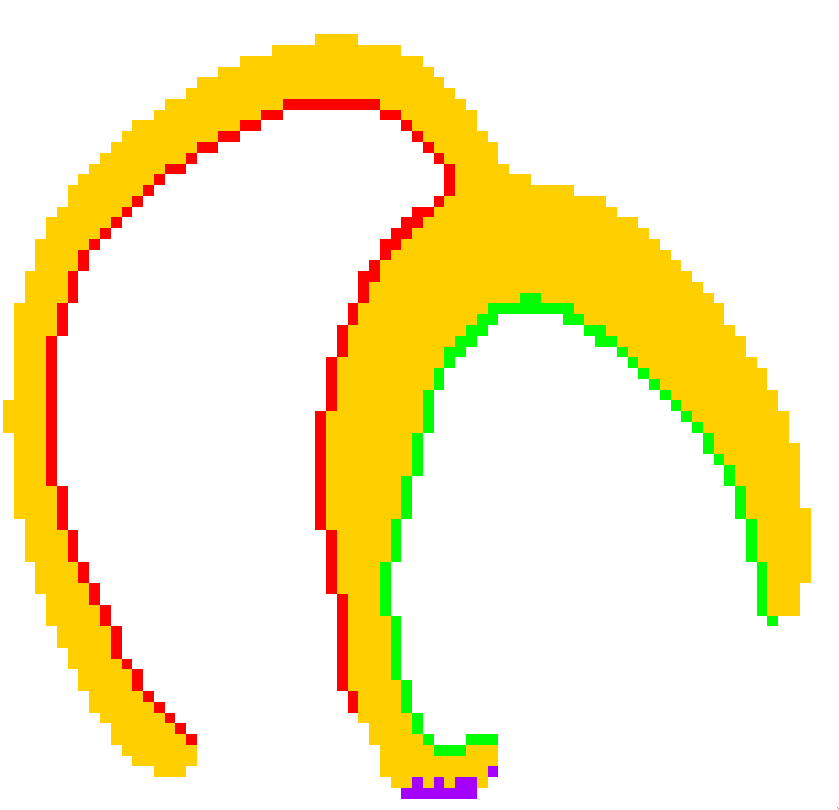

In order to detect VT isthmuses based on image criteria alone, we used the objectness filter (Antiga 2007) as implemented in the Insight Toolkit (Kitware, USA). It is a generalisation of the Hessian matrix-based vessel detection method described by Frangi (Frangi et al. 1998). We fed this algorithm with a modified version of the thickness map where only potential isthmus candidates (Ghannam et al. 2018), i.e., moderately thin zones between 2 and 5 mm thickness, remained. We tuned the filter’s parameters to respect the topological properties known to characterise VT isthmuses on CT images (Ghannam et al. 2018). We named the output maps obtained this way channelness maps.
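The principle of a Hessian-based ridge measure applied to a candidate map can be sketched in two dimensions as follows. This is a simplified Frangi-style measure written for illustration, not the ITK objectness filter. The binary candidate map, the function names and the default parameters (`beta`, `c`) are assumptions, and no multi-scale smoothing is performed.

```python
import numpy as np


def candidate_map(thickness_mm, low=2.0, high=5.0):
    """1 where the wall is moderately thin (isthmus candidate), 0 elsewhere."""
    return ((thickness_mm >= low) & (thickness_mm <= high)).astype(float)


def ridge_measure_2d(image, beta=0.5, c=None):
    """Frangi-style response to bright, elongated structures (single scale)."""
    gy, gx = np.gradient(image)
    hyy, hyx = np.gradient(gy)
    hxy, hxx = np.gradient(gx)
    hxy = 0.5 * (hxy + hyx)  # symmetrise the finite-difference Hessian
    # Closed-form eigenvalues of the symmetric 2x2 Hessian.
    half_trace = 0.5 * (hxx + hyy)
    root = np.sqrt((0.5 * (hxx - hyy)) ** 2 + hxy ** 2)
    e1, e2 = half_trace + root, half_trace - root
    swap = np.abs(e1) > np.abs(e2)
    lam1 = np.where(swap, e2, e1)  # smaller magnitude: along the ridge
    lam2 = np.where(swap, e1, e2)  # larger magnitude: across the ridge
    s2 = lam1 ** 2 + lam2 ** 2
    if c is None:
        c = 0.5 * np.sqrt(s2.max()) or 1.0
    rb2 = (lam1 / (np.abs(lam2) + 1e-12)) ** 2
    response = np.exp(-rb2 / (2.0 * beta ** 2)) * (1.0 - np.exp(-s2 / (2.0 * c ** 2)))
    response[lam2 > 0] = 0.0  # keep bright ridges only
    return response


# Toy thickness map: 1 mm dense scar crossed by a 3-voxel-wide 3.5 mm channel.
thickness = np.full((15, 21), 1.0)
thickness[:, 9:12] = 3.5
response = ridge_measure_2d(candidate_map(thickness))
```

On this toy map the response peaks along the centre line of the channel and vanishes in the homogeneous scar, which is the behaviour expected from a channelness map.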

2.2.2.7 Comparison with recorded activation maps

In order to gain insight into our model's relevance and limitations, we compared our simulations to activation maps acquired during RFA interventions. These activation maps were produced by the Rhythmia mapping system (Boston Scientific, USA) using a 64-electrode Orion catheter. They were recorded during sinus rhythm, controlled pacing and VT. They come in the form of surface triangular meshes in different reference frames than the corresponding CT images.

In order to compare both visually (qualitatively) and numerically (quantitatively) the outputs of our simulations to the reference data, we took advantage of the correlation between low-voltage zones and CT wall thinning areas (Ghannam et al. 2018) to manually align the two geometries. After fitting an ellipsoid to the point cloud and using the ellipsoid’s centre, we defined each point by spherical coordinates. A visual representation of this registration framework is presented in fig. 13. Finally, it was possible to project the data from the EP studies onto the more accurate geometry extracted from the CT images.
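The spherical parameterisation can be illustrated with the following sketch. It assumes that the centre (here simply passed as an argument, where the study uses the centre of a fitted ellipsoid) is already known and that the two geometries are already aligned. The function names and the nearest-direction matching rule are assumptions made for this example.

```python
import numpy as np


def to_spherical(points, centre):
    """Radius, polar angle and azimuth of `points` as seen from `centre`."""
    p = np.asarray(points, dtype=float) - np.asarray(centre, dtype=float)
    r = np.linalg.norm(p, axis=1)
    safe_r = np.where(r > 0, r, 1.0)  # avoid dividing by zero at the centre
    theta = np.arccos(np.clip(p[:, 2] / safe_r, -1.0, 1.0))
    phi = np.arctan2(p[:, 1], p[:, 0])
    return r, theta, phi


def project_by_direction(src_points, src_values, dst_points, centre):
    """Give each destination point the value of the source point that is seen
    in the closest direction from `centre` (the radius is ignored)."""
    def unit(points):
        p = np.asarray(points, dtype=float) - np.asarray(centre, dtype=float)
        return p / np.linalg.norm(p, axis=1, keepdims=True)

    cosines = unit(dst_points) @ unit(src_points).T  # pairwise angular similarity
    return np.asarray(src_values)[cosines.argmax(axis=1)]


centre = (0.0, 0.0, 0.0)
ep_points = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # toy EP mesh
ep_times = [10.0, 20.0, 30.0]                                    # toy activation times
ct_points = [[0.0, 0.1, 2.0], [3.0, 0.2, 0.0]]                   # toy CT surface
projected = project_by_direction(ep_points, ep_times, ct_points, centre)
```

The pairwise similarity matrix grows with the product of the two point counts, so a real implementation would need chunking or a spatial index for maps of about 10 000 points.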

Using the ellipsoid long axis and a manually chosen point on the septum, this method also allows the fast and automated generation of bull’s eye plots.

2.2.3 Results

2.2.3.1 Execution time

The semi-automated segmentation of the left ventricle takes around 10 minutes for an experienced user of the MUSIC software. The thickness computation takes about 1 minute, depending on the number of voxels of the wall mask. The actual simulation takes less than 1 minute. The channelness computation is the longest automated step, taking around 2 to 4 minutes. These informal benchmark results were measured on an Intel i7-5500U-powered laptop.

2.2.3.2 Automated ridge detection

On all channelness maps, an expert cardiologist and an expert radiologist visually assessed that ridges corresponding to their critical isthmus definition could be detected using the channelness filter.

2.2.3.3 Simulated activation maps

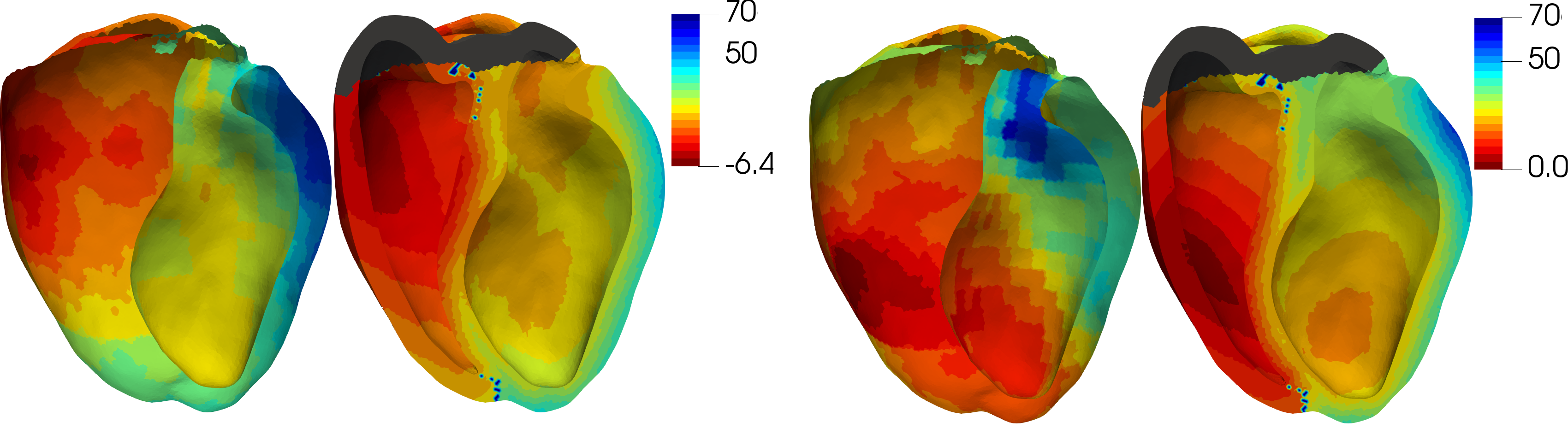

For most cases, the general activation patterns of the simulations obtained using our framework match the recordings when using velocity and thickness thresholds found in the literature. As can be seen in fig. 14, late activation of the scar is visible both on simulations and on controlled pacing. The characteristic figure-of-eight activation patterns of re-entrant VT can also be accurately reproduced using our framework, when using a refractory wall to give the propagation an initial direction. Video versions are available as supplementary materials to offer a better appreciation of the similar dynamics of wave front propagation between the simulations and the measurements. This is actually the usual way recorded activation maps are visualised by the interventional cardiologist in order to decide ablation targets.

2.2.4 Discussion

2.2.4.1 Model limitations

We deliberately opted for the simplest cardiac EP model in order to build a fast pipeline. For instance, most of the related work includes an algorithmic fibre orientation estimation that we did not include. We do not believe that it would improve the results much, as fibre orientation and its influence are still hard to estimate, particularly in infarcted zones.

In contrast, we believe that an important phenomenon that our model does not address is the front curvature impact. A straight wave front is known to propagate faster than a curved wave front, but this is not the case in our simulations. In some cases, this results in fast, unrealistic, “U-turns” of the excitation. This could be improved with extensions of the Eikonal model (Chinchapatnam et al. 2008), or by switching to a reaction-diffusion model parameterised on wall thickness. The main challenge will here be to preserve a clinically compatible framework in terms of parameterisation complexity and required computational time.

2.2.4.2 Velocities

Our model probably simplifies activation patterns inside the VT isthmuses by globally slowing down the propagation in them. A recent animal study (Anter et al. 2016) indeed suggests that the depolarisation slows down when entering and exiting the isthmus but propagates normally in between. The wave front would then not be slowed down inside the core of the isthmus. However, we think that our global slowing down in the whole isthmus is a relevant averaging simplification at the organ scale. Should our further work show the prominence of this phenomenon, an option would be to use channelness maps to change velocities in isthmuses accordingly.

2.2.4.3 Thickness thresholds

Based on data from a recent study (Ghannam et al. 2018), we defined global thickness thresholds across all patients to discriminate between healthy and scar zones. Specifically, we set them to below 2 mm for “pure scar” and above 5 mm for healthy myocardium. However, in some VT cases, the wave front propagation crossed scar zones too fast and matching the recording proved more challenging.

We noticed that in those cases, for instance the one shown in fig. 16, the simulation output could be drastically improved by shifting these thresholds to higher values, i.e., by raising the \(p\) parameter of our thickness to velocity transfer function. Using patient-specific thresholds both for thickness maps analysis and model parameterisation could improve the use of CT images in the context of VT RFA. These patient-specific thresholds could possibly be determined by the disease history or image features such as the distribution of thickness for a specific myocardial wall.

2.2.4.4 Parameter optimisation

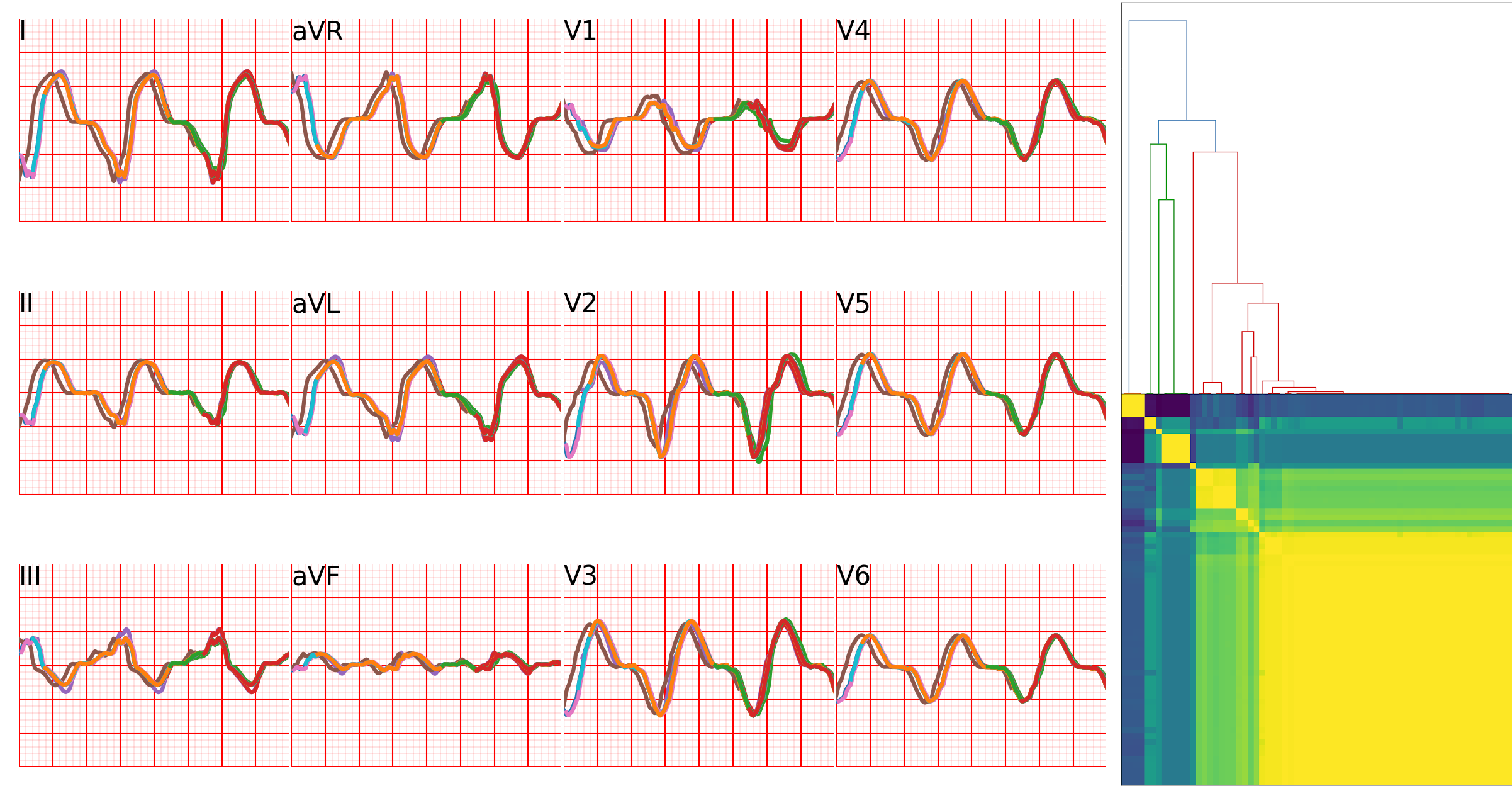

Using the registration of image and EP data in the common frame of reference described in the Methods section, it was possible to define a metric to quantify differences between simulations and ground truths. Using the mean square difference between these data, we therefore optimised the thickness to velocity transfer function parameters (\(v_{\max}\), \(v_{\min}\), \(p\) and \(r\), see fig. 12) to minimise the discrepancy between simulations and recordings. To this end, we used the covariance matrix adaptation evolution strategy (CMA-ES) algorithm (Hansen, Müller, and Koumoutsakos 2003), suited for this type of non-convex optimisation problem. While minimising the mean square difference did improve the numerical matching of the simulations to the recording, the overall activation pattern was often degraded. This is partly due to noise in the target EP data and to the fact that the mean square difference metric may not be adapted to preserving activation patterns such as the typical figure-of-eight shape of re-entrant VT. How to define such a pattern-aware metric is still an open question. The output of the channelness filter also depends heavily on setting the right parameters for the objectness mapping, including a channelness threshold. These parameters should be optimised and validated on a larger multi-centric database.

2.2.4.5 Conclusion: a clinically compatible modelling framework

The simulation framework presented in this article may be useful for EP modelling to enter clinical practice. It requires little human interaction and expertise to go from imaging data to EP simulations. It is very fast and would even be faster by developing implementations more suited to such purpose than the generic ones we currently use. The availability and reproducibility offered by CT images and such automation could help personalised cardiac modelling to enter clinical applications.

2.2.5 Additional material

Cedilnik, N., Duchateau, J., Sacher, F., Jaïs, P., Cochet, H., and Sermesant, M. Functional Imaging and Modeling of the Heart (FIMH), 2019.

2.3 Automating the segmentation process

2.3.1 Introduction

Catheter radiofrequency ablation of the ischemic arrhythmogenic substrate has been shown to be effective in preventing sudden cardiac death. This efficacy can partially be attributed to the integration of information extracted from cardiac imaging data both prior to and during the intervention (Yamashita et al. 2016).

While cardiac magnetic resonance imaging is still widely considered the gold standard for ischemic scar assessment and model personalisation (Prakosa et al. 2018), computed tomography (CT) has recently gained interest in the electrophysiological (EP) community. Cardiac CT is indeed able to locate and evaluate the ischemic scar heterogeneity (Mahida et al. 2017). This is possible by identifying zones of myocardial wall thinning and evaluating the severity of this thinning; this approach is even able to predict abnormal electrical activity (Yamashita et al. 2017; Ghannam et al. 2018). Moreover, CT is less affected than MRI by the presence of an implantable cardioverter-defibrillator, a device commonly found in patients likely to undergo catheter ablation of ventricular tachycardia.

Given these scar characteristics on CT images, it is no surprise that CT has been successfully used as a way to personalise EP models (Cedilnik et al. 2018). However, up until now, this personalisation relies on manual or semi-automated segmentation of the left ventricular (LV) wall, despite the availability of efficient three-dimensional medical image automated segmentation methods (Isensee et al. 2018).

In this paper we evaluate the impact of a deep learning automated segmentation approach on CT ischemic scar assessment and the related model personalisation framework. This framework (fig. 17) takes a cardiac CT image as input. The LV is automatically segmented using a neural network, allowing myocardial thickness to be automatically computed (Yezzi and Prince 2003). After choosing a virtual pacing point, activation maps can be simulated using the Eikonal model. Finally, electrograms can be generated through a novel approach presented in section 2.3.2.4.

2.3.2 Methods

2.3.2.1 Deep Learning Segmentation

The deep learning approach we applied is based on a previously described methodology to segment the left atrium (Jia et al. 2018). It relies on the use of two successive specialised U-nets (Isensee et al. 2018). The first is used to coarsely segment the full original CT image. Its output is used to compute the bounding box of the region of interest. This allows a cropping of the original image for a higher resolution segmentation of the desired structures.

2.3.2.1.1 Database and Training

The network was trained from scratch using a database of 500 manual segmentations of contrast-enhanced cardiac CT images. These segmentations comprise the LV endocardium, the LV epicardium and the right ventricular epicardium. We used 450 cases for training per se and 50 cases for validation, with a loss function defined as the opposite of a label-wise Dice score. The model was fitted using an NVIDIA GeForce GTX 1080 Ti provided by the NEF computing platform.
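A loss of this kind can be written as follows in NumPy. This is only a sketch: the soft (probabilistic) formulation, the averaging over labels and the smoothing constant `eps` are assumptions, since the text does not specify them, and an actual training loop would use the equivalent expression in a deep learning framework.

```python
import numpy as np


def negative_dice_loss(pred, target, eps=1e-7):
    """Opposite of the Dice score averaged over labels.

    `pred` and `target` have shape (n_labels, ...) with values in [0, 1].
    """
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    axes = tuple(range(1, pred.ndim))  # sum over the spatial axes only
    intersection = (pred * target).sum(axis=axes)
    denominator = pred.sum(axis=axes) + target.sum(axis=axes)
    dice = (2.0 * intersection + eps) / (denominator + eps)
    return -dice.mean()


# Two toy labels on a 4x4 image: perfect overlap gives the minimal loss of -1.
target = np.zeros((2, 4, 4))
target[0, :2] = 1.0
target[1, 2:] = 1.0
perfect = negative_dice_loss(target, target)
disjoint = negative_dice_loss(target[::-1], target)
```

Minimising this quantity maximises the overlap for each structure separately, which avoids the largest structure dominating the optimisation.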

2.3.2.1.2 “Low Resolution” U-Net

The first network’s input is the original CT image resampled to \(128\times 128 \times 128\) voxels and it outputs 3 ventricular masks: 2 for the left ventricle (epicardium and endocardium) and 1 for the right ventricular epicardium. Each training image was augmented twice, each time by a random rotation of the original image about each axis, with angles drawn in the \(\left[-\frac{\pi}{8}; \frac{\pi}{8} \right]\) interval.

2.3.2.1.3 “High Resolution” U-Net

The second network was trained using cropped CT images around the LV, with 5 mm margins and resampled to \(144\times144\times144\) voxels. Each original image was augmented 20 times: by random rotations in the \(\left[-\frac{\pi}{7}; \frac{\pi}{7} \right]\) range along each axis, a random shearing in the \([-0.1; 0.1]\) range along each axis and the application of Gaussian blur with a kernel using a random standard deviation picked in the \([0.5; 2]\) range for half of the augmentations. The network outputs are the two left ventricular masks.

2.3.2.1.4 Post-processing

The network’s outputs were thresholded at 0.5. In order to obtain spatially coherent masks, they were filtered to keep only the largest connected component and to forbid overlap between masks. Remaining holes in the masks were filled using the most frequent label in the hole neighbourhood. The masks were finally up-sampled to the original CT image resolution using a nearest neighbour interpolation.
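The first two post-processing steps (thresholding and largest connected component) can be sketched as follows. The breadth-first search below is a plain-Python stand-in chosen to keep the example dependency-free. The function names and the face connectivity are assumptions, and the overlap removal and hole filling steps are not shown.

```python
from collections import deque

import numpy as np


def largest_component(mask):
    """Keep only the largest face-connected component of a boolean mask."""
    mask = np.asarray(mask, dtype=bool)
    labels = np.zeros(mask.shape, dtype=int)
    offsets = []
    for axis in range(mask.ndim):
        for step in (-1, 1):
            offset = [0] * mask.ndim
            offset[axis] = step
            offsets.append(tuple(offset))
    best_label, best_size, current = 0, 0, 0
    for start in zip(*np.nonzero(mask)):
        if labels[start]:
            continue  # already part of a labelled component
        current += 1
        labels[start] = current
        queue, size = deque([start]), 0
        while queue:
            p = queue.popleft()
            size += 1
            for offset in offsets:
                q = tuple(a + b for a, b in zip(p, offset))
                inside = all(0 <= c < n for c, n in zip(q, mask.shape))
                if inside and mask[q] and not labels[q]:
                    labels[q] = current
                    queue.append(q)
        if size > best_size:
            best_label, best_size = current, size
    if best_label == 0:
        return np.zeros(mask.shape, dtype=bool)
    return labels == best_label


def postprocess(probabilities, threshold=0.5):
    """Threshold a network output and keep its largest connected component."""
    return largest_component(np.asarray(probabilities) > threshold)


probabilities = np.zeros((5, 5))
probabilities[0, 0] = 0.9      # spurious isolated voxel
probabilities[2:4, 2:4] = 0.8  # main structure
clean = postprocess(probabilities)
```

Isolated false positives far from the ventricle are removed this way, which matters because the thickness computation assumes a single, spatially coherent wall.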

2.3.2.2 Thickness Computation

Smooth and robust LV wall thickness estimation is not trivial, especially in three dimensions. It requires solving a partial differential equation using the endocardium and epicardium masks (Yezzi and Prince 2003). Such an approach, which assigns a thickness value to each voxel of the LV wall mask, is particularly well suited to simulations on regular grids; it has been previously used to such ends (Cedilnik et al. 2018).

2.3.2.3 Electrophysiological Model

We used the thickness information to parameterise an Eikonal model previously described in (Cedilnik et al. 2018). Briefly, wall thinning is related to a macroscopic slowing of the activation front, due to a microscopic zig-zag course of activation in the infarcted tissue. A random pacing point was chosen in the healthy tissue, defined by a wall thickness greater than 5 mm, to initiate the propagation. The simulation was stopped at 500 ms.

2.3.2.4 Electrogram Simulation with the Eikonal Model

We propose an efficient way to simulate electrograms with the Eikonal model. It couples the activation map with a transmembrane action potential model and a propagation methodology based on the dipole formulation (Giffard-Roisin, Fovargue, et al. 2016). In this framework, every voxel is considered a dipole with local current density:

\[\mathbf{j}_{eq} = -\sigma \nabla{v},\](4)

where \(\sigma\) is the local conductivity and \(\nabla{v}\) is the spatial gradient of the potential \(v\). Using the chain rule, we can rewrite eq. 4 as:

\[\mathbf{j}_{eq} = -\sigma \frac{\partial v}{\partial T} \times \frac{\partial T}{\partial X} = -\sigma \frac{\partial v}{\partial T} \nabla{T},\]

where \(\nabla T\) is the gradient of the activation map (output of the Eikonal model).

To compute \(\frac{\partial v}{\partial T}\), we used a forward Euler scheme to solve the Mitchell-Schaeffer cardiomyocyte action potential model (Mitchell and Schaeffer 2003) and stored its time derivative. We matched the activation time obtained with this model to the activation time obtained with the Eikonal model. To simulate bipolar electrograms, we placed two virtual electrodes (distance between them: 0.9 mm) inside the heart cavity and subtracted one signal from the other.
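As an illustration of the first step, the sketch below integrates the two-variable Mitchell-Schaeffer model with a forward Euler scheme and stores the time derivative of the potential. The parameter values are the ones commonly quoted for this model and are an assumption here, as are the stimulus amplitude and duration, the time step and the function name; they are not necessarily the settings used in this work.

```python
import numpy as np


def mitchell_schaeffer(t_end=500.0, dt=0.05, tau_in=0.3, tau_out=6.0,
                       tau_open=120.0, tau_close=150.0, v_gate=0.13,
                       stim_amp=0.1, stim_dur=2.0):
    """Forward Euler integration of the Mitchell-Schaeffer model (times in ms).

    Returns the time axis, the normalised potential v and its time derivative.
    All default values are illustrative assumptions.
    """
    n_steps = int(round(t_end / dt))
    t = np.arange(n_steps) * dt
    v = np.zeros(n_steps)
    dvdt = np.zeros(n_steps)
    h = 1.0  # gating variable, fully open at rest
    for k in range(n_steps - 1):
        stim = stim_amp if t[k] < stim_dur else 0.0
        # inward current, outward current and external stimulus
        dvdt[k] = h * v[k] ** 2 * (1.0 - v[k]) / tau_in - v[k] / tau_out + stim
        # the gate opens below v_gate and closes above it
        dhdt = (1.0 - h) / tau_open if v[k] < v_gate else -h / tau_close
        v[k + 1] = v[k] + dt * dvdt[k]
        h += dt * dhdt
    return t, v, dvdt
```

Given an Eikonal activation time `T` at a voxel, the stored derivative can then be shifted in time, for instance with `np.interp(t - T, t, dvdt, left=0.0, right=0.0)`, before being multiplied by \(-\sigma \nabla T\).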

In order to handle the activation map gradient values at the mask boundaries, we ignored the gradient component(s) in the direction(s) flowing out of the mask. We adapted the volume used in the dipole moment computation accordingly (Giffard-Roisin, Fovargue, et al. 2016).

2.3.2.5 Evaluation of the Automated Segmentation Impact

In order to evaluate the automated segmentation impact, we focused on 8 cardiac CT images (and their corresponding manual segmentation) of patients suffering from re-entrant VT. These images were used neither for training the automated segmentation nor for its validation. For 6 cases (patients 1-6) the original CT images were available; for the remaining 2 cases, resampled images aligned to the heart short axis were used.

Binary masks were resampled to the original CT image resolution when available, in order to compute a Dice score on the LV wall.
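For reference, the Dice score between two binary masks is twice the size of their intersection divided by the sum of their sizes. A minimal sketch, assuming NumPy arrays of identical shape (the function name is hypothetical):

```python
import numpy as np


def dice_score(mask_a, mask_b):
    """Dice similarity coefficient between two binary masks of identical shape."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    total = a.sum() + b.sum()
    if total == 0:
        return 1.0  # convention chosen here: two empty masks agree perfectly
    return 2.0 * np.logical_and(a, b).sum() / total
```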

To compare thickness and activation maps, a mid wall mesh was generated from the manual wall segmentation. Maps corresponding to manual and automated segmentation were then projected on this common frame of reference. A point-wise comparison was made possible this way, and median differences were computed.

The electrograms obtained with both segmentation methods were compared using the Pearson correlation coefficient \(r\).

2.3.3 Results

2.3.3.1 Segmentation

The training phase of the “high resolution” network reached a plateau at a Dice score of \(0.96\) for the LV wall. Data augmentation was key to reaching this high score, especially adding the blur filter.

The median Dice score of the 8 images after up-sampling was lower: \(0.90\). There is no notable difference in the segmentation quality when using original CT images versus short-axis resampled images.

In zones of extreme thinning, the wall continuity was not always observed.

2.3.3.2 Thickness Computation

Across all cases, the median thickness of the manually segmented walls was 5.8 \(\pm\) 3.2 mm versus 5.8 \(\pm\) 3.0 mm for the automatic segmentation. Very similar thickness maps were obtained using the automated segmentation (median differences across all cases: 0.7 mm, see fig. 19).

2.3.3.3 Eikonal Model

| Patient | DSC | HD (mm) | AHD (mm) | TD (mm) | LATD (ms) | EGr |
|---|---|---|---|---|---|---|
| 1 | 0.88 | 19.15 | 0.10 | 0.7 | 5 | 0.99 |
| 4 | 0.90 | 17.47 | 0.09 | 0.8 | 2 | 0.99 |
| 5 | 0.87 | 13.25 | 0.09 | 0.8 | 3 | 0.99 |
| 6 | 0.91 | 5.00 | 0.05 | 0.5 | 1 | 1.00 |
| 7 | 0.91 | 16.44 | 0.06 | 0.6 | 1 | 1.00 |
| 8 | 0.88 | 7.57 | 0.09 | 1.4 | 29 | 0.75 |
| 9 | 0.89 | 22.86 | 0.11 | 0.7 | 2 | 0.94 |
| 10 | 0.91 | 12.71 | 0.08 | 0.7 | 1 | 0.99 |
| median | 0.90 | 14.85 | 0.09 | 0.7 | 2 | 0.99 |

As expected, given similar thickness maps and geometries, the “virtual pacing” results were very close. The median activation time of the manual segmentation models was \(143\) ms, with a median difference with automated segmentation as little as \(2\) ms across all cases.

2.3.3.4 Electrogram Simulations

Bipolar signals generated with the automated and manual segmentations were virtually identical, with a median Pearson’s \(r\) correlation coefficient of 0.99. All \(r\)s were above 0.94 except one (patient 8, 0.72), and all had \(p\)-values below \(10^{-10}\).

2.3.4 Discussion

2.3.4.1 Segmentation

The automated segmentation algorithm was shown to produce segmentations very close to those obtained by trained radiologists, even with different orientations of the input images. A perfect match between the algorithm and the expert segmentation is not desirable anyway, as there is also uncertainty in the manual segmentation. The available automated segmentation can probably be improved by training the neural networks on GPUs with more memory, allowing a better input resolution and less loss of information in the down-sampling phase.

2.3.4.2 Thickness

The differences in the corresponding thickness maps are even smaller. Scar heterogeneity is preserved and comparable between manual and automated segmentation. In some particular cases of wall configuration (holes within the wall…) the thickness computation could be problematic but it only happens in very localised cases which were easy to identify.

Ideally it would have been preferable to compare scar localization on MRI images, but they were not available for these patients.

2.3.4.3 Modelling

As expected, the similarity of the thickness maps leads to very similar simulation outputs between the automated and manual segmentations. The wall discontinuities are not problematic, since they concern zones of extreme wall thinning that are considered non-conductive anyway. These discontinuities explain most of the discrepancy between the resulting activation maps.

2.3.5 Conclusion

We presented in this manuscript the automatic segmentation of the myocardial wall in CT images and the quantification of its impact on personalised models. We showed that the extraction of most of the infarct-related arrhythmia information from CT images is not much affected by the use of an automated segmentation methodology, which supports its robustness. This is another major step towards the future use of EP model personalisation in clinical practice. Furthermore, we presented a novel methodology to generate electrograms from activation maps using the Eikonal model and the dipole formulation. Here we used the same action potential characteristics across the whole domain, but our formulation makes it possible to easily vary them, using the LV wall thickness for instance. Filtering the signals obtained in the same way they are filtered in the EP lab could further improve their realism.

Cedilnik, N., and Sermesant, M. Statistical Atlases and Computational Models of the Heart stacom2019

2.4 Piggy-CRT

2.4.1 Introduction

For our participation in the STACOM piggyCRT challenge, we decided to use the Eikonal model of cardiac electrophysiology (EP). Using the fast marching method, simulations using this model are very fast to solve, which makes them both particularly suited to a clinical workflow (Cedilnik et al. 2018) and easy to personalise. Moreover, as we are only interested in local activation times, the Eikonal model is relevant.

We determined the optimal parameters for this model, i.e., the parameters that minimise the discrepancy between the recorded and the simulated pre-cardiac resynchronisation therapy (CRT) activation maps, for each pig. This model personalisation was then used to predict the post-CRT activation maps using the same parameters (except for the initialisation of the propagation).

2.4.2 Model personalization: general framework

2.4.2.1 Eikonal model

The Eikonal model of cardiac electrophysiology outputs an activation map, i.e., local activation times (LATs) and is defined as follows:

\[v \sqrt{\nabla T^{t} D \nabla T} = 1\](5)

where \(T\) is the local activation time, \(v\) is the local conduction velocity and \(D\) the anisotropic tensor to account for the fibre orientation. We experimented both with fibre orientations generated using the classic Streeter model and the provided OTRBM model.

To make it possible to use multiple onset locations with different delays, we ran one simulation \(T_i\) for each onset \(i\). We then added the desired onset delay \(d_i\) to the whole activation map and combined them into a final activation map by choosing the minimal LAT for each element \(X\) of the domain \(\Omega\):

\[T_{\text{final}}(X) = \min_{i} \left(T_i(X) + d_i\right), \quad \forall X \in \Omega\](6)
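The combination in eq. 6 amounts to an element-wise minimum over the delayed single-onset maps. A minimal sketch, assuming the maps are stored as NumPy arrays of identical shape (the function name is hypothetical):

```python
import numpy as np


def combine_activation_maps(maps, delays):
    """Element-wise minimum of single-onset activation maps shifted by their delays.

    maps: array of shape (n_onsets, ...), one activation map per onset.
    delays: array of shape (n_onsets,), in the same time unit as the maps.
    """
    maps = np.asarray(maps, dtype=float)
    delays = np.asarray(delays, dtype=float)
    # reshape the delays so that each one broadcasts over its whole map
    shifted = maps + delays.reshape((-1,) + (1,) * (maps.ndim - 1))
    return shifted.min(axis=0)
```

Since each single-onset simulation is independent of the delays, the delays can be changed without re-running the Eikonal solver.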

Instead of solving the equation on the unstructured grids provided by the challenge, we decided to voxelise them, i.e., to define the domain on a regular lattice of 1 cubic millimetre resolution. Two reasons motivated this choice:

morphological information on an individual heart is generally obtained from the segmentation of imaging data, which is naturally of this form,

the fast marching method is faster on Cartesian grids.

As for the implementation, we used open-source fast marching routines available online (Mirebeau 2017).

2.4.2.2 Parameters fitting with CMA-ES

We used the covariance matrix adaptation evolution strategy (CMA-ES) (Hansen, Akimoto, and Baudis 2019), an evolutionary algorithm, to fit our model parameters to the recorded EP maps. This approach has been used before for a similar challenge (Giffard-Roisin, Fovargue, et al. 2016), and is well suited to multi-parameter, non-convex optimisation problems.

We chose to minimise the root median square difference between the recorded data and the simulation output. This choice is motivated by the noise in the training data, probably due to the acquisition itself and to its registration on the image-derived myocardial geometry: the resulting outliers would otherwise drive a root mean square error.
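The difference between the two criteria can be illustrated with a short sketch, assuming recorded and simulated LATs sampled at the same locations (function names are hypothetical):

```python
import numpy as np


def root_median_square_error(recorded, simulated):
    """Square root of the median of the squared differences."""
    diff = np.asarray(recorded, dtype=float) - np.asarray(simulated, dtype=float)
    return float(np.sqrt(np.median(diff ** 2)))


def root_mean_square_error(recorded, simulated):
    """Square root of the mean of the squared differences, for comparison."""
    diff = np.asarray(recorded, dtype=float) - np.asarray(simulated, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))
```

With recorded values `[0, 0, 0, 0, 100]` and a simulation that is zero everywhere, the single outlier leaves the root median square error at 0 while the root mean square error rises to about 44.7.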

2.4.3 Velocities and domain division

Given this framework, the main parameter that we tried to personalise was the local conduction velocity. But what is the optimal domain decomposition to define the number of local parameters to estimate?

Using a different velocity for each voxel would be both impractical (too many parameters to optimise) and meaningless from a physiological standpoint. Moreover, it would probably result in massive over-fitting to the pre-CRT maps, with lower predictive power.

Keeping this in mind, we first tried to optimise a global speed for the whole domain, and then tried assigning separate velocities to:

both endocardia to capture both the Purkinje network (PN) and the left bundle branch block influences,

both ventricle walls, for the same reason,

the septum, for the same reason and because propagation through the septum could be much slower due to fibre orientation,

the scar if present,

the 17 AHA segments of the left ventricle (LV), to determine if this would be beneficial for the personalisation.

As the optimisation process is reasonably fast with our framework, we decided to test several combinations of these “velocity zones,” as shown in fig. 24.

2.4.4 Onsets

Besides the local propagation velocity, the Eikonal model requires specifying starting points for the wave front propagation. Choosing such points for the pre-CRT sinus rhythm maps is not trivial.

2.4.4.1 Locations

Ideally, the simulated pre-CRT maps should use the atrio-ventricular (AV) node as unique onset. We first tried to parameterise our model in such a way, but this approach rapidly proved very inefficient due to the massive influence of the septomarginal trabecula (ST) in the activation of the right ventricle (RV). As a consequence, it seemed more reasonable to use two different onsets, both in the RV endocardial layer. Unfortunately, the pre-CRT maps did not include any EP study of the RV endocardial surface.

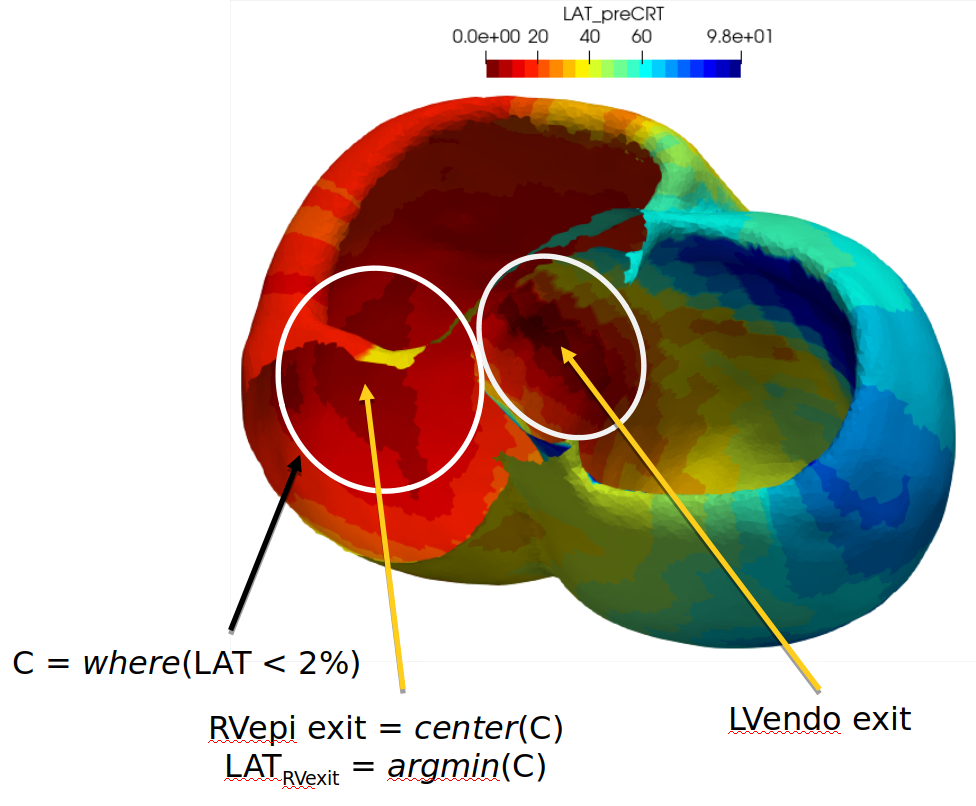

To overcome this limitation of the personalisation data, we chose the centre of gravity of all the points whose pre-CRT LATs were below the second percentile of a given area and picked the closest RV endocardium point. Picking the point with the smallest LAT may sound more relevant, but because of the propagation spread, likely due to the PN, the simulations fit better using the “percentile” way (more on this in ). This was done for both the LV area, approximately locating the LV onset, and the RV area, approximately locating the ST epicardial exit point, as illustrated in fig. 21.
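The “percentile” selection can be sketched as below. This is an illustration under assumptions: the recorded points of one area and the candidate RV endocardial points are given as coordinate arrays, the function name is hypothetical, and points at or below the percentile value are kept so that the selection is never empty.

```python
import numpy as np


def pick_onset(points, lats, endo_points, percentile=2.0):
    """Pick the endocardial point closest to the centroid of the earliest recordings.

    points: (n, 3) coordinates of the recorded points in one area.
    lats: (n,) recorded local activation times at these points.
    endo_points: (m, 3) candidate onset coordinates on the endocardium.
    """
    points = np.asarray(points, dtype=float)
    lats = np.asarray(lats, dtype=float)
    endo_points = np.asarray(endo_points, dtype=float)
    early = lats <= np.percentile(lats, percentile)
    centroid = points[early].mean(axis=0)
    distances = np.linalg.norm(endo_points - centroid, axis=1)
    return endo_points[np.argmin(distances)]
```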

2.4.4.2 Delays

To determine the delays associated with these onsets (eq. 6), we proceeded as follows:

Run an Eikonal simulation (eq. 5) for each onset location.

Choose the delay such that the lowest LAT of the simulation matches the data, respectively for the LV endocardium (LV onset) and the RV epicardium (ST epicardial breakthrough).
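One possible reading of the second step is that the delay is the offset aligning the earliest simulated LAT with the earliest recorded LAT over the relevant surface. The sketch below encodes this interpretation, which is an assumption, with simulated LATs sampled at the recording locations and a hypothetical function name:

```python
import numpy as np


def onset_delay(recorded_lats, simulated_lats):
    """Delay to add to a single-onset map so that its earliest LAT matches the data."""
    return float(np.min(recorded_lats) - np.min(simulated_lats))
```

For example, recorded LATs of 12, 20 and 35 ms and simulated LATs of 2, 9 and 30 ms at the same locations give a delay of 10 ms.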

2.4.4.3 Radii

Picking unique points for the onsets caused the optimisation to converge on unrealistically fast velocities to compensate for the spreading of the early activation due to the PN. It seemed logical to overcome this difficulty by “dilating” our onsets. To choose the radius of these dilations, we conducted the following study:

We fixed the endocardial velocity at 3 m/s and the scar velocity at 0.1 m/s.

We experimented with a wide range of velocities for the rest of the domain, between 0.5 and 4 m/s.

For each velocity, we tested different onset radii, between 0 and 30 mm.

We looked for the optimal myocardial velocity/onset radius combination, i.e., the combination that minimised the root median square error between the EP data and the simulations.

The results of this study are shown in fig. 22.

Empirically, we could determine that an onset radius of 10 mm for the pre-CRT simulations and 2 mm for the post-CRT simulations allowed physiology-compatible velocities.

2.4.5 Constraints

We had to define parameter bounds for the optimisation process. We experimented with 3 types of regional constraints:

“Physiological”: \(v_{scar} \in [0, 0.5]\), \(v_{wall} \in [0.5, 1]\), \(v_{endo} \in [1, 4]\)